Hello everyone

I want to train a language model (LM), but I get an error and I don’t understand what it means.

First, I preprocessed the corpora with this command:

sudo nvidia-docker run -v $PWD/opennmt_data/:/home/data -d claudia_opennmt th preprocess.lua -data_type monotext -train /home/data/twe.europarl.nl.token.txt -valid /home/data/tgt-val-twe-nl.txt -save_data /home/data/datalm

This is the command I am running for training:

sudo nvidia-docker run -v $PWD/opennmt_data/:/home/data -d claudia_opennmt th train.lua -model_type lm -data /home/data/datalm-train.t7 -save_model /home/data/demo-lm

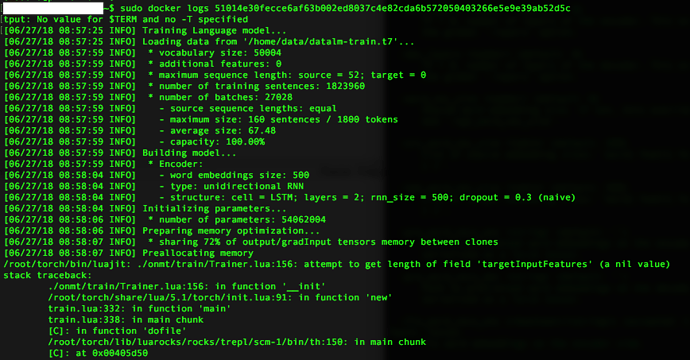

And this is the error I get

Can anyone help me?

Thanks!