Hello,

I’m training a model for a certain language with an extremely small dataset. During training, once my early stopping conditions were met, I noticed this message:

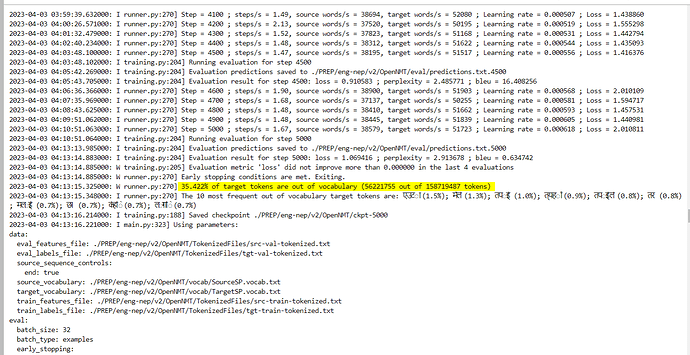

`35.422% of target tokens are out of vocabulary`

This is the first time I’ve noticed this message, and I’m not sure where the 35% comes from. Does it mean that 35% of the tokens in my validation file don’t exist in my vocabulary?

Based on my SentencePiece config, I should be covering 100% of the vocabulary.
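To check that interpretation directly, the OOV rate of the validation targets can be recomputed by hand. This is a minimal sketch under stated assumptions, not how the toolkit itself computes the figure: it assumes the target vocabulary file has one token per line (optionally followed by a tab and a score or count, as in a SentencePiece `.vocab` file), and that the validation target file is already tokenized into space-separated SentencePiece pieces. The function names and file paths are hypothetical.

```python
from collections.abc import Iterable


def load_vocab(lines: Iterable[str]) -> set[str]:
    """Collect tokens from vocab lines of the form 'token' or 'token<TAB>score'.

    Assumption: the token is the first tab-separated field; blank lines are skipped.
    """
    vocab: set[str] = set()
    for line in lines:
        line = line.rstrip("\n")
        if not line:
            continue
        vocab.add(line.split("\t")[0])
    return vocab


def oov_rate(target_lines: Iterable[str], vocab: set[str]) -> float:
    """Return the percentage of whitespace-separated target tokens missing from vocab."""
    total = 0
    oov = 0
    for line in target_lines:
        for tok in line.split():
            total += 1
            if tok not in vocab:
                oov += 1
    # Avoid division by zero on an empty file.
    return 100.0 * oov / total if total else 0.0


def oov_rate_from_files(vocab_path: str, target_path: str) -> float:
    """Hypothetical convenience wrapper that reads both files as UTF-8."""
    with open(vocab_path, encoding="utf-8") as f:
        vocab = load_vocab(f)
    with open(target_path, encoding="utf-8") as f:
        return oov_rate(f, vocab)
```

For example, `oov_rate_from_files("vocab.tgt", "valid.tgt.sp")` (hypothetical paths) returns a percentage that can be compared with the logged value. If the result is close to 0% while the log still reports about 35%, that suggests the logged figure is computed against a different vocabulary or on differently tokenized data.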

Thank you,

Samuel