Hi.

I’m trying to fine-tune the model for domain adaptation.

My steps:

- Trained a general model on a dataset of 57 million rows - training data and 3,000 rows - validation data;

- Prepared datasets for automotive theme. 4,500 lines - training, 1,500 lines - validation;

- Created a new directory for training, put there config.yml and model.py files identical to the general training.

- Left dictionaries unchanged.

- Set the path to the checkpoint of the general model, launched a new training.

Result:

- BLEU grows very fast during training on validation data. In just 3 epochs, the training reaches 80 units and continues to grow to almost 100 units.

- The quality of the translation becomes significantly worse in comparison with the general model (-15 Bleu).

What am I doing wrong?

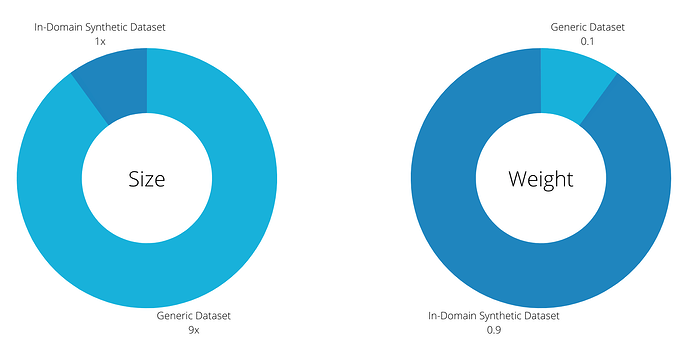

Is it right to fine-tune using only new data, or is it better to mix them with the original dataset and continue training?

Can you share general tips on fine-tuning models?

Thank you!