Hi all - any help is much appreciated!

I’m working on building a multilingual translation model. I had no issues during training and did receive validation scores throughout, so I’m not sure why I’m having these issues now.

ISSUES

The source sentence is always “None”. I saw another thread where the source sentences were also missing, but nothing there fixed my issue. I also have a second issue: I do not receive the same number of predictions as the source sentences I input. If I input 1000 sentences, I receive 990-995 predictions (the number changes every few runs). I’ve tried batching and reducing the number of sentences in the source file, but the only time I receive a matching number of predictions is when I input 1 sentence at a time.

My test data is BPE-encoded the same way as my training data. I’ve also swapped my test file for my training file, thinking it might be an issue with OOV tokens or formatting somewhere, but I continue to have the same issues.
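A quick way to narrow down the count mismatch is to audit the source file itself. The sketch below is only a diagnostic under assumptions: it assumes the file is UTF-8 and that blank lines or unusual line-break characters (such as U+2028) might be involved, which is a common cause of this kind of mismatch in line-oriented tools but is not confirmed for this case. The function names `audit_lines` and `audit_file` are made up for illustration.

```python
import sys

# Characters that str.splitlines() treats as line boundaries but that a plain
# "\n" split does not. A lone "\r" inside a line is included as well.
HIDDEN_BREAKS = ["\r", "\x0b", "\x0c", "\x1c", "\x1d", "\x1e", "\x85", "\u2028", "\u2029"]


def audit_lines(text):
    """Return counts that may help explain a source/prediction mismatch."""
    lines = text.split("\n")
    if lines and lines[-1] == "":
        lines.pop()  # ignore the empty piece after a final newline
    # Treat Windows "\r\n" endings as ordinary line endings.
    lines = [line[:-1] if line.endswith("\r") else line for line in lines]
    return {
        # Number of lines when only "\n" ends a line.
        "newline_lines": len(lines),
        # Number of lines when every Unicode line boundary ends a line.
        "splitlines_lines": len(text.splitlines()),
        # 1-based numbers of empty or whitespace-only lines.
        "blank_lines": [i for i, line in enumerate(lines, 1) if not line.strip()],
        # 1-based numbers of lines containing a hidden line-break character.
        "hidden_break_lines": [
            i for i, line in enumerate(lines, 1)
            if any(ch in line for ch in HIDDEN_BREAKS)
        ],
    }


def audit_file(path):
    """Read a file without newline translation and audit its lines."""
    with open(path, encoding="utf-8", newline="") as handle:
        return audit_lines(handle.read())


if __name__ == "__main__":
    # Usage: python audit.py src_data output_data
    for path in sys.argv[1:]:
        print(path, audit_file(path))
```

Running it on both `src_data` and `output_data` shows whether the two line counts already disagree in the source file, and which source lines are blank or contain a hidden break. If both counts match and no lines are flagged, the cause is probably elsewhere.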

COMMAND

onmt_translate -model model.pt -src src_data -output output_data -gpu 0 -batch_size 8 -batch_type sents -verbose

I’ve also tried a higher batch size, -batch_type tokens, and removing both -batch_size and -batch_type from the command. Neither issue changed.

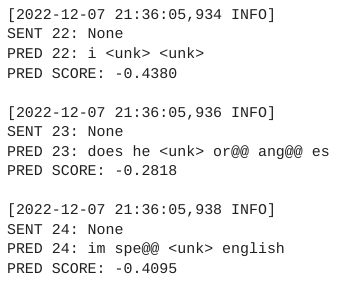

OUTPUT

MODEL TRAINING CONFIG

Data

data:
  corpus_1: # Cornish-English
    path_src: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/div_cor_eng_train_src.FIN
    path_tgt: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/div_cor_eng_train_trg.BPE
    transforms: [filtertoolong]
    weight: 1
  corpus_2: # English-Cornish
    path_src: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/div_eng_cor_train_src.FIN
    path_tgt: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/div_eng_cor_train_trg.BPE
    transforms: [filtertoolong]
    weight: 1
  corpus_3: # Welsh-English
    path_src: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/div_cym_eng_train_src.FIN
    path_tgt: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/div_cym_eng_train_trg.BPE
    transforms: [filtertoolong]
    weight: 1
  corpus_4: # English-Welsh
    path_src: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/div_eng_cym_train_src.FIN
    path_tgt: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/div_eng_cym_train_trg.BPE
    transforms: [filtertoolong]
    weight: 1
  corpus_3: # Welsh-Cornish
    path_src: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/div_cym_cor_train_src.FIN
    path_tgt: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/div_cym_cor_train_trg.BPE
    transforms: [filtertoolong]
    weight: 1
  corpus_4: # Cornish-Welsh
    path_src: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/div_cor_cym_train_src.FIN
    path_tgt: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/div_cor_cym_train_trg.BPE
    transforms: [filtertoolong]
    weight: 1
  valid: # Cornish-Welsh/Welsh-Cornish
    path_src: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/cor_cym_val_together_src
    path_tgt: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/cor_cym_val_together_trg
    transforms: [filtertoolong]
src_vocab: /proj/uppmax2022-2-17/mbruton/cor_cym_model/BPE/cor_cym_eng_combined.vocab
share_vocab: True
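One detail in the data section as posted: the labels corpus_3 and corpus_4 each appear twice. Many YAML loaders silently keep only the last value for a repeated key, so it may be worth checking the real config file for this. Whether it has anything to do with the translation issues is not established here. The following is a minimal sketch of such a check using only the standard library. It assumes a simply indented config with no multi-line strings and no `#` inside values, and `find_duplicate_keys` is a hypothetical helper, not part of OpenNMT-py.

```python
def find_duplicate_keys(yaml_text):
    """Return (line_number, key) pairs for keys repeated under the same parent.

    This is an indentation-based scan, not a real YAML parser.
    """
    duplicates = []
    stack = []  # (indent, keys_seen) for each mapping that is currently open
    for lineno, raw in enumerate(yaml_text.splitlines(), 1):
        line = raw.split("#", 1)[0].rstrip()  # drop trailing comments
        if not line.strip() or ":" not in line:
            continue
        indent = len(line) - len(line.lstrip())
        key = line.strip().split(":", 1)[0]
        # Close mappings that are nested deeper than the current line.
        while stack and stack[-1][0] > indent:
            stack.pop()
        # Open a new mapping when the line is nested deeper than the last one.
        if not stack or stack[-1][0] < indent:
            stack.append((indent, set()))
        seen = stack[-1][1]
        if key in seen:
            duplicates.append((lineno, key))
        seen.add(key)
    return duplicates


# Shortened sample with the same corpus labels as the posted config.
sample = "\n".join([
    "data:",
    "  corpus_3: # Welsh-English",
    "    weight: 1",
    "  corpus_4: # English-Welsh",
    "    weight: 1",
    "  corpus_3: # Welsh-Cornish",
    "    weight: 1",
    "  corpus_4: # Cornish-Welsh",
    "    weight: 1",
])

for lineno, key in find_duplicate_keys(sample):
    print(f"line {lineno}: duplicate key {key!r}")
```

The repeated `weight` keys are not reported because each one sits under a different parent, while the second `corpus_3` and `corpus_4` are.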

General opts

save_model: /proj/uppmax2022-2-17/mbruton/cor_cym_model/bpe_model/semisup/bpe_semisup
save_checkpoint_steps: 20000
valid_steps: 20000
train_steps: 200000

Batching

world_size: 1
gpu_ranks: [0]
num_workers: 4
batch_type: "sents"
batch_size: 8
batch_size_multiple: 8
vocab_size_multiple: 8
max_generator_batches: 2
accum_count: [4]
accum_steps: [0]

Optimization

model_dtype: "fp32"
optim: "adam"
learning_rate: 2
warmup_steps: 8000
decay_method: "rsqrt"
adam_beta2: 0.998
max_grad_norm: 0
label_smoothing: 0.1
param_init: 0
param_init_glorot: true
normalization: "sents"

Model

encoder_type: transformer
decoder_type: transformer
max_relative_positions: 20
enc_layers: 6
dec_layers: 6
heads: 8
hidden_size: 512
word_vec_size: 512
transformer_ff: 2048
dropout_steps: [0]
dropout: [0.3]