Hello,

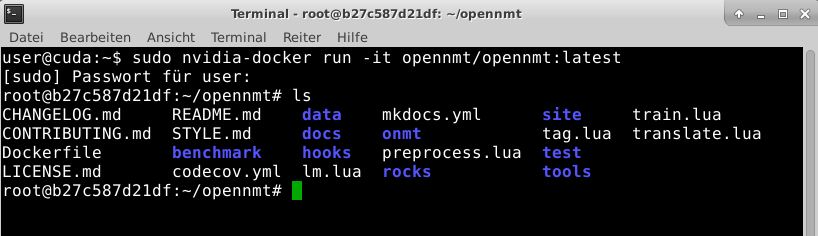

I'm desperate. I have a new GeForce GTX 1070 with 8 GB of memory and want to use it with OpenNMT (the Lua/Torch version). My training command is: `th train.lua -data Testdaten/Test_Update_de_en-train.t7 -save_model Testdaten/Test_Update_DE_EN-model -gpuid 1`

I thought this was correct, but I get the output below and I don't know how to fix it. The error message mentions cutorch:

```
Training is starting
/home/user/torch/install/bin/luajit: ./onmt/utils/Cuda.lua:76: /home/user/torch/install/share/lua/5.1/trepl/init.lua:389: module 'cutorch' not found:No LuaRocks module found for cutorch
	no field package.preload['cutorch']
	no file '/home/user/.luarocks/share/lua/5.1/cutorch.lua'
	no file '/home/user/.luarocks/share/lua/5.1/cutorch/init.lua'
	no file '/home/user/torch/install/share/lua/5.1/cutorch.lua'
	no file '/home/user/torch/install/share/lua/5.1/cutorch/init.lua'
	no file './cutorch.lua'
	no file '/home/user/torch/install/share/luajit-2.1.0-beta1/cutorch.lua'
	no file '/usr/local/share/lua/5.1/cutorch.lua'
	no file '/usr/local/share/lua/5.1/cutorch/init.lua'
	no file '/home/user/.luarocks/lib/lua/5.1/cutorch.so'
	no file '/home/user/torch/install/lib/lua/5.1/cutorch.so'
	no file '/home/user/torch/install/lib/cutorch.so'
	no file './cutorch.so'
	no file '/usr/local/lib/lua/5.1/cutorch.so'
	no file '/usr/local/lib/lua/5.1/loadall.so'
stack traceback:
	[C]: in function 'error'
	./onmt/utils/Cuda.lua:76: in function 'init'
	train.lua:300: in function 'main'
	train.lua:338: in main chunk
	[C]: in function 'dofile'
	…user/torch/install/lib/luarocks/rocks/trepl/scm-1/bin/th:150: in main chunk
	[C]: at 0x00405d50
Training finished
```

To test my GPU installation, I ran `sudo docker run --runtime=nvidia --rm nvidia/cuda:9.0-base nvidia-smi`, and the output looks OK to me:

```
+-----------------------------------------------------------------------------+
| NVIDIA-SMI 384.130                Driver Version: 384.130                   |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|===============================+======================+======================|
|   0  GeForce GTX 1070    Off  | 00000000:02:00.0 Off |                  N/A |
|  0%   42C    P8     9W / 151W |     67MiB /  8112MiB |      1%      Default |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                       GPU Memory |
|  GPU       PID   Type   Process name                             Usage      |
|=============================================================================|
+-----------------------------------------------------------------------------+
```
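To separate a Torch installation problem from an OpenNMT problem, a small check like the following could show whether the Torch interpreter can load cutorch at all. This is only a sketch: the file name `check_cutorch.lua` and the function `checkCutorch` are hypothetical names, not part of OpenNMT, and it assumes it is run with the same `th` from `/home/user/torch/install` that produced the error above.

```lua
-- check_cutorch.lua: hypothetical helper script, not part of OpenNMT.
-- Run with:  th check_cutorch.lua

-- Try to load cutorch without aborting the script if it is missing.
local function checkCutorch()
  local ok, result = pcall(require, 'cutorch')
  if not ok then
    -- On failure, result holds the error message from require
    -- (e.g. "module 'cutorch' not found ...").
    return false, 'cutorch could not be loaded: ' .. tostring(result)
  end
  -- On success, result is the cutorch module table.
  return true, 'cutorch loaded, visible GPUs: ' .. result.getDeviceCount()
end

local ok, message = checkCutorch()
print(message)
```

If this prints the same "module 'cutorch' not found" message, the problem would presumably be in the Torch installation itself (cutorch not installed) rather than in the OpenNMT training command.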