I am using OpenNMT-py.

Everything in the quickstart (http://opennmt.net/OpenNMT-py/quickstart.html) works well.

Now I want to train on my own data.

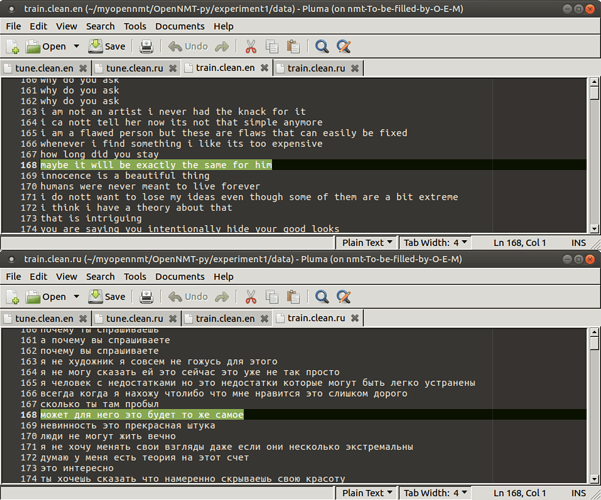

The files are clean. I even tried small 30-line files, and I can see that there are no empty lines.

I also tried displaying every character, including invisible ones, but there is nothing except ‘\n’.

So, I am not sure what to try.

OpenNMT says:

[2019-11-18 19:08:16,547 INFO] Step 10000/100000; acc: 64.88; ppl: 6.94; xent: 1.94; lr: 1.00000; 11576/11541 tok/s; 365 sec

[2019-11-18 19:08:16,547 INFO] Loading dataset from data/demo.valid.0.pt

[2019-11-18 19:08:21,239 INFO] number of examples: 487196

Traceback (most recent call last):

File "/home/nmt/.pyenv/versions/main/bin/onmt_train", line 11, in <module>

load_entry_point('OpenNMT-py==1.0.0rc2', 'console_scripts', 'onmt_train')()

File "/home/nmt/.pyenv/versions/main/lib/python3.7/site-packages/OpenNMT_py-1.0.0rc2-py3.7.egg/onmt/bin/train.py", line 200, in main

train(opt)

File "/home/nmt/.pyenv/versions/main/lib/python3.7/site-packages/OpenNMT_py-1.0.0rc2-py3.7.egg/onmt/bin/train.py", line 86, in train

single_main(opt, 0)

File "/home/nmt/.pyenv/versions/main/lib/python3.7/site-packages/OpenNMT_py-1.0.0rc2-py3.7.egg/onmt/train_single.py", line 143, in main

valid_steps=opt.valid_steps)

File "/home/nmt/.pyenv/versions/main/lib/python3.7/site-packages/OpenNMT_py-1.0.0rc2-py3.7.egg/onmt/trainer.py", line 258, in train

valid_iter, moving_average=self.moving_average)

File "/home/nmt/.pyenv/versions/main/lib/python3.7/site-packages/OpenNMT_py-1.0.0rc2-py3.7.egg/onmt/trainer.py", line 314, in validate

outputs, attns = valid_model(src, tgt, src_lengths)

File "/home/nmt/.pyenv/versions/main/lib/python3.7/site-packages/torch/nn/modules/module.py", line 547, in __call__

result = self.forward(*input, **kwargs)

File "/home/nmt/.pyenv/versions/main/lib/python3.7/site-packages/OpenNMT_py-1.0.0rc2-py3.7.egg/onmt/models/model.py", line 42, in forward

enc_state, memory_bank, lengths = self.encoder(src, lengths)

File "/home/nmt/.pyenv/versions/main/lib/python3.7/site-packages/torch/nn/modules/module.py", line 547, in __call__

result = self.forward(*input, **kwargs)

File "/home/nmt/.pyenv/versions/main/lib/python3.7/site-packages/OpenNMT_py-1.0.0rc2-py3.7.egg/onmt/encoders/rnn_encoder.py", line 74, in forward

packed_emb = pack(emb, lengths_list)

File "/home/nmt/.pyenv/versions/main/lib/python3.7/site-packages/torch/nn/utils/rnn.py", line 275, in pack_padded_sequence

_VF._pack_padded_sequence(input, lengths, batch_first)

RuntimeError: Length of all samples has to be greater than 0, but found an element in 'lengths' that is <= 0
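
The traceback goes through `validate` right after `data/demo.valid.0.pt` is loaded, so the validation files may be worth checking as well as the training files. Below is a minimal sketch of the check I have in mind. It assumes tokens are whitespace-separated, so a line that contains only spaces, tabs, or a non-breaking space would count as zero tokens even though it does not look empty. The function name `find_zero_token_lines` is my own, not part of OpenNMT-py.

```python
import sys


def find_zero_token_lines(path):
    """Return 1-based numbers of lines that yield no tokens when split on whitespace."""
    bad = []
    with open(path, encoding="utf-8") as f:
        for lineno, line in enumerate(f, start=1):
            # str.split() with no arguments splits on any Unicode whitespace,
            # so whitespace-only lines produce an empty token list.
            if not line.split():
                bad.append(lineno)
    return bad


if __name__ == "__main__":
    # Pass the raw source/target text files (train and valid) as arguments.
    for path in sys.argv[1:]:
        bad = find_zero_token_lines(path)
        print(f"{path}: {len(bad)} line(s) with zero tokens; first few: {bad[:20]}")
```

Run it on the raw text files that were given to preprocessing, not on the `.pt` files. If it reports any line numbers, those lines are candidates for the zero-length sample the error complains about.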