Hello

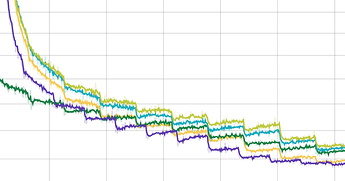

I’ve trained quite a few models with OpenNMT-py, and this is the training perplexity (PPL) that I get:

Each point on the chart is the average PPL over 50 mini-batches.
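To make the chart concrete, here is a minimal sketch of how one such point could be computed. It assumes each point is the exponential of the token-weighted mean negative log-likelihood over a window of 50 mini-batches. The function name `windowed_ppl` and the `(summed_nll, n_tokens)` input format are hypothetical and used only for illustration; they are not part of the OpenNMT-py API.

```python
import math

def windowed_ppl(batch_stats, window=50):
    """Return one perplexity value per window of mini-batches.

    batch_stats: list of (summed_nll, n_tokens) tuples, one per mini-batch,
    where summed_nll is the negative log-likelihood summed over the batch's
    target tokens (hypothetical input format).
    """
    points = []
    for start in range(0, len(batch_stats), window):
        chunk = batch_stats[start:start + window]
        total_nll = sum(nll for nll, _ in chunk)
        total_tokens = sum(n for _, n in chunk)
        # Perplexity is exp of the average per-token loss in the window.
        points.append(math.exp(total_nll / total_tokens))
    return points
```

Note that averaging per-batch perplexities directly would give a somewhat different (generally higher) number than exponentiating the averaged loss, so it matters which of the two a chart uses.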

Within an epoch the perplexity barely changes, and sometimes it even increases. At the start of each epoch, however, there is a significant drop in PPL.

Even though the training perplexity goes down and the validation perplexity also goes down on the next epoch, I still have the feeling that I’m ultimately losing accuracy.

Could you please tell me what the possible causes might be?