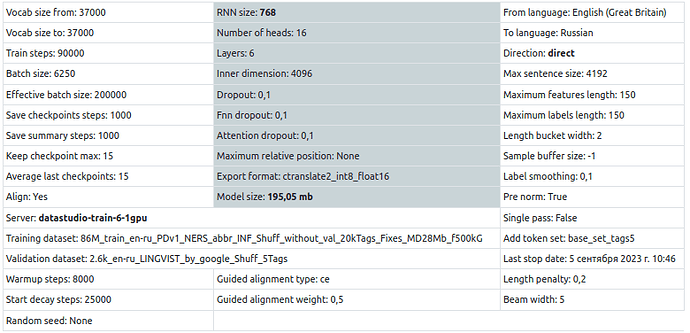

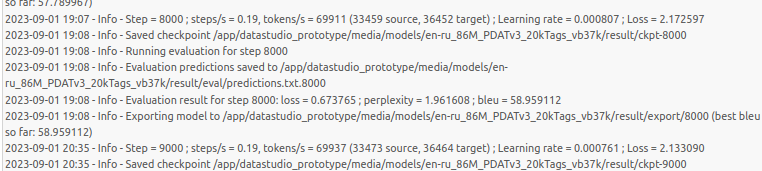

Why does the lr start to decay right after warmup_steps=8000 when start_decay_step is set to 15000?

Can you post the complete configuration file and the training command line?

We indeed see “Start decay steps” in this screenshot, but you should check that the parameter is actually set in the training configuration and in the correct place.
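
One thing worth ruling out while you gather the config: if the run uses a warmup-based schedule such as the inverse square root ("noam") schedule, the decay begins right after warmup by construction, and a separate decay-start setting may not be consulted at all. Below is a minimal sketch of that schedule, assuming this is the one in use. The function name, `model_dim=512`, and `base_lr=1.0` are illustrative assumptions, not values taken from your setup.

```python
def noam_lr(step, warmup_steps=8000, model_dim=512, base_lr=1.0):
    """Inverse square root schedule with linear warmup (hypothetical helper).

    The rate rises linearly until `warmup_steps`, then decays as step ** -0.5.
    Note that no separate "start decay" step appears anywhere in the formula.
    """
    step = max(step, 1)  # avoid 0 ** -0.5 at the very first step
    scale = model_dim ** -0.5
    return base_lr * scale * min(step ** -0.5, step * warmup_steps ** -1.5)


if __name__ == "__main__":
    # Print the rate around the warmup boundary and at step 15000.
    for s in (4000, 8000, 8001, 15000):
        print(s, noam_lr(s))
```

With this schedule the peak is at step 8000 and the rate falls from there, which would match a curve that starts decaying right after warmup. If your configuration uses a different decay method, this sketch does not apply.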
