Hello,

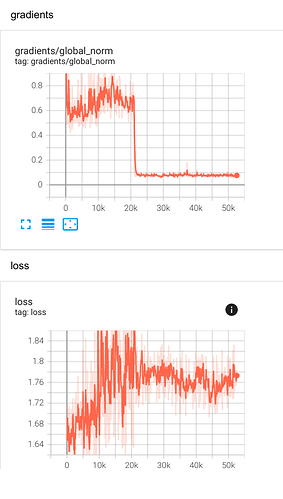

I tried the following, but I couldn't work out what the charts are telling me about the global gradient norm. It dropped very low, but that wasn't reflected in the BLEU results (I only got a slight boost in BLEU). Maybe I am doing something wrong?

I averaged the last two checkpoints and continued training from the averaged checkpoint, then repeated the same procedure every ~10k steps, i.e.:

- 10k + 20k → avg 20k, trained until 30k
- avg 20k + 30k → avg 30k, trained until 40k
- avg 30k + 40k → avg 40k, trained until 50k, and so on
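The averaging-and-resume schedule above can be sketched in plain Python. This is a minimal illustration under stated assumptions: checkpoints are represented as dicts mapping parameter names to flat lists of floats (in a real setup they would be framework tensors, e.g. loaded with `torch.load`), and `train` is a hypothetical placeholder for the actual training loop. Only the control flow (average the last two checkpoints, resume from the average, repeat every interval) is meant to mirror the procedure described.

```python
from typing import Dict, List, Tuple

# Assumed representation: parameter name -> flat list of float weights.
StateDict = Dict[str, List[float]]


def average_checkpoints(a: StateDict, b: StateDict) -> StateDict:
    """Element-wise mean of two checkpoints with identical parameter names and sizes."""
    if a.keys() != b.keys():
        raise ValueError("checkpoints have different parameter names")
    averaged: StateDict = {}
    for name in a:
        if len(a[name]) != len(b[name]):
            raise ValueError(f"size mismatch for parameter {name!r}")
        averaged[name] = [(x + y) / 2.0 for x, y in zip(a[name], b[name])]
    return averaged


def train(state: StateDict, steps: int) -> StateDict:
    """Hypothetical stand-in for real training: shifts every weight by steps / 10_000."""
    return {name: [w + steps / 10_000 for w in ws] for name, ws in state.items()}


def iterative_averaging(
    initial: StateDict, interval: int, rounds: int
) -> Tuple[List[StateDict], StateDict]:
    """Average the last two checkpoints, resume from the average, and repeat."""
    prev = train(initial, interval)  # e.g. checkpoint at 10k
    curr = train(prev, interval)     # e.g. checkpoint at 20k
    averages: List[StateDict] = []
    for _ in range(rounds):
        avg = average_checkpoints(prev, curr)  # e.g. avg 20k
        averages.append(avg)
        nxt = train(avg, interval)             # resume from the average, e.g. until 30k
        prev, curr = avg, nxt                  # the average becomes one of the next pair
    return averages, curr


if __name__ == "__main__":
    avgs, final = iterative_averaging({"w": [0.0]}, interval=10_000, rounds=3)
    print([a["w"][0] for a in avgs], final["w"])
```

Written out, avg 30k = (avg 20k + ckpt 30k) / 2 = ckpt 10k / 4 + ckpt 20k / 4 + ckpt 30k / 2, so each older checkpoint keeps a geometrically shrinking weight in the running average rather than dropping out after one round.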

Here are the charts: