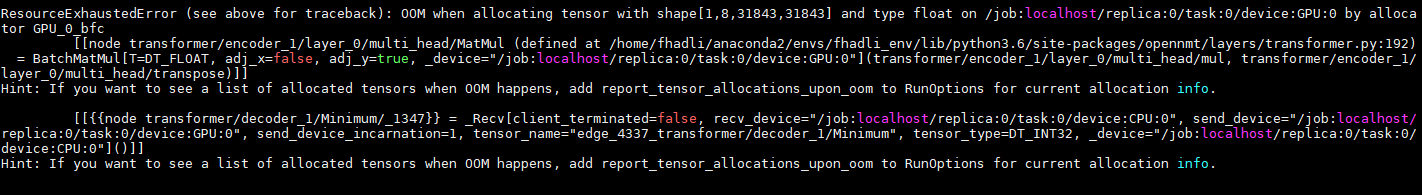

Dear OpenNMT community, I trained a Transformer model with OpenNMT-tf and run prediction with the "onmt-main infer" command. However, it does not work on a large file (more than 2000 sentences): all I get is a ResourceExhaustedError. I did not expect this, because I am only running inference, which should need far fewer resources than training. For more information, I want to run inference on 15 million sentences.

Hi,

It looks like you have a very long sentence in your file. Sentences should probably not have more than 100 tokens, which is the maximum length seen during training (with the default settings).
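To check whether this is the cause, you could scan the input file for overly long lines before running inference. Below is a minimal sketch, assuming the file is already tokenized with tokens separated by whitespace and holds one sentence per line. The names `find_long_sentences` and `report_long_sentences` are made up for illustration and are not part of OpenNMT-tf, and the limit of 100 simply mirrors the default training maximum mentioned above.

```python
from typing import Iterable, Iterator, Tuple

# Assumed limit: the default maximum length seen during training.
MAX_TOKENS = 100


def find_long_sentences(
    lines: Iterable[str], max_tokens: int = MAX_TOKENS
) -> Iterator[Tuple[int, int]]:
    """Yield (line_number, token_count) for lines with more than max_tokens tokens.

    Tokens are counted by splitting on whitespace, which is only accurate
    if the file is already tokenized that way (an assumption here).
    """
    for line_number, line in enumerate(lines, start=1):
        token_count = len(line.split())
        if token_count > max_tokens:
            yield line_number, token_count


def report_long_sentences(path: str, max_tokens: int = MAX_TOKENS) -> None:
    """Print the position and length of every overly long line in a file."""
    with open(path, encoding="utf-8") as source:
        for line_number, token_count in find_long_sentences(source, max_tokens):
            print(f"line {line_number}: {token_count} tokens")
```

Calling `report_long_sentences("input.txt")` (with your own file path) lists the lines to split or remove. The file is read line by line, so it should also be usable on a 15 million sentence file without loading everything into memory.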