Hi,

In some situations, we do NOT want certain words (e.g., placeholders) to be translated.

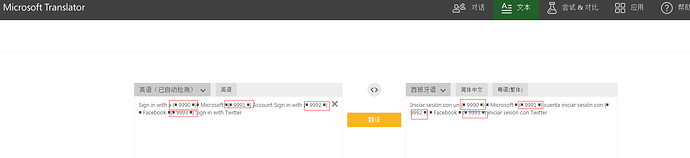

For example, the source segment below contains several placeholders ({9990}, {9991}…):

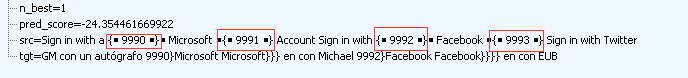

src=Sign in with a {■ 9990 ■}■ Microsoft ■{■ 9991 ■} Account Sign in with {■ 9992 ■}■ Facebook ■{■ 9993 ■} Sign in with Twitter

But in the translation returned by the NMT engine, almost all of the placeholders are missing:

tgt=GM con un autógrafo 9990}Microsoft Microsoft}}} en con Michael 9992}Facebook Facebook}}}} en con EUB
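To make the loss concrete, here is a minimal Python sketch that lists which source placeholders do not survive intact in the target. It assumes every placeholder has the form `{■ NNNN ■}`, as in the segment above. The regex and the function name `missing_placeholders` are my own for illustration, not part of any translator API.

```python
import re

# Assumption: a placeholder is a run of digits wrapped in "{■ " and " ■}",
# matching the form used in the source segment above.
PLACEHOLDER = re.compile(r"\{■ (\d+) ■\}")

src = ("Sign in with a {■ 9990 ■}■ Microsoft ■{■ 9991 ■} Account "
       "Sign in with {■ 9992 ■}■ Facebook ■{■ 9993 ■} Sign in with Twitter")
tgt = ("GM con un autógrafo 9990}Microsoft Microsoft}}} en con Michael "
       "9992}Facebook Facebook}}}} en con EUB")


def missing_placeholders(source, target):
    """Return IDs of placeholders present in source but not intact in target, in source order."""
    kept = set(PLACEHOLDER.findall(target))
    return [pid for pid in PLACEHOLDER.findall(source) if pid not in kept]


print(missing_placeholders(src, tgt))  # ['9990', '9991', '9992', '9993']
```

For this segment it reports all four IDs: the target keeps only fragments such as `9990}` and `9992}`, with the rest of each placeholder stripped.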

When I enter the same source segment into Microsoft Bing Translator, the placeholders are kept exactly as in the source and appear in the correct positions.

Is there any way, when calling the NMT engine, to keep such placeholders identical to the source and in the correct positions in the translation?

Thanks.