When I was using a simple .pt file of the model, my inference time was approximately 10 seconds, but after converting the model to CTranslate2, the inference time is approximately 20 seconds. How can I decrease this time? I am using an Ubuntu CPU server.

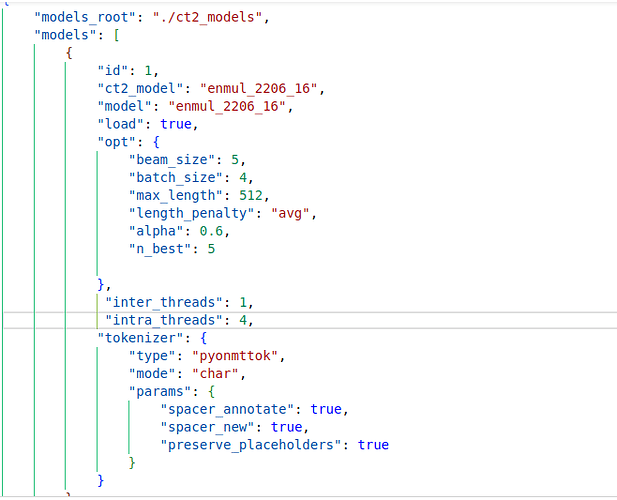

my model config file is

What are you measuring? 10 or 20 seconds seems like a long time for a single request.

Also, how many CPU cores does this Ubuntu server have?
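To make "what are you measuring" concrete, here is a minimal sketch of one way to time a single request separately from model loading and warm-up. The helper names `measure_latency` and `summarize` and the placeholder workload are hypothetical, not part of CTranslate2 or PyTorch; the idea is to pass in a function that runs exactly one inference on an already loaded model.

```python
import statistics
import time


def measure_latency(run_once, warmup=2, repeats=5):
    """Call run_once() a few times untimed, then return timed per-call seconds."""
    for _ in range(warmup):
        run_once()  # warm-up calls are deliberately not timed
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        run_once()
        timings.append(time.perf_counter() - start)
    return timings


def summarize(timings):
    """Reduce a list of timings to min / median / max, in seconds."""
    return {
        "min": min(timings),
        "median": statistics.median(timings),
        "max": max(timings),
    }


if __name__ == "__main__":
    # Placeholder workload (an assumption for illustration only).
    # Replace it with one inference call on a model that is already loaded,
    # so that loading time is excluded from the measurement.
    def fake_inference():
        sum(i * i for i in range(100_000))

    print(summarize(measure_latency(fake_inference)))
```

Running the same helper once with the .pt model and once with the converted model, on the same input, should give numbers that are easier to compare than a single end-to-end run.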

CPU information (the server has 15 GB of RAM):

Architecture: x86_64

CPU op-mode(s): 32-bit, 64-bit

Address sizes: 46 bits physical, 48 bits virtual

Byte Order: Little Endian

CPU(s): 4

On-line CPU(s) list: 0-3

Vendor ID: GenuineIntel

Model name: Intel(R) Xeon(R) Platinum 8272CL CPU @ 2.60GHz

CPU family: 6

Model: 85

Thread(s) per core: 1

Core(s) per socket: 4

Socket(s): 1

Stepping: 7

BogoMIPS: 5187.81
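As a rough sketch, the physical core count can be derived from the `lscpu` fields above as sockets times cores per socket. The helper names `parse_lscpu` and `physical_cores` are hypothetical. The resulting number is a plausible starting value for the `intra_threads` argument of `ctranslate2.Translator`, though whether that setting explains the slowdown here is an assumption that would need to be checked by measurement.

```python
def parse_lscpu(text):
    """Parse 'Key: value' lines from lscpu output into a dict of strings."""
    fields = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep:  # skip blank lines and lines without a colon
            fields[key.strip()] = value.strip()
    return fields


def physical_cores(fields):
    """Physical cores = sockets * cores per socket (hyperthreads not counted)."""
    return int(fields["Socket(s)"]) * int(fields["Core(s) per socket"])


# Excerpt of the lscpu output posted above.
LSCPU_EXCERPT = """\
CPU(s): 4
Thread(s) per core: 1
Core(s) per socket: 4
Socket(s): 1
"""

cores = physical_cores(parse_lscpu(LSCPU_EXCERPT))
print(cores)  # prints 4
```

With one thread per core on this machine, the logical and physical counts coincide, so there are four cores available to a single inference call.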