Hello,

I’ve been facing the challenge of combining existing “external” models such as DeepL with my custom model trained (with low resource). The goal is mainly to fix in domain words and punctuation.

So I gave it a go this weekend and did a POC (Proof of concept) and so far the results are far beyond what I originally though.

This technic that I’m about to elaborate is based on this philosophy:

In the context of Evaluating a model with Ctranslate2, if the predict score is extremely low, it can be either because the model was not expose enough to understand well how to translate that specific token, or because the model knows really well how to and there is a mistake in the translation.

So I decided to identify those really low predict scores and then validate with a full translation from the model if at that same spot the model was certain. This enables me to categorize if a really low predict score was a mistake (or out of domain word) or just a lack of exposure from my custom trained model.

Here what I did:

- Translate Source in DeepL

- Evaluate the results with my custom model (Ctranslate2)

- Combine tokens so that you have a list of full words and keep the worse token predict score for each one of them. (ex: if you have the tokens [“Phy”, “lo”, “so”, “phy”] with respectively these predict scores: [-0.01, -0.02, -5.2, -0.02] would become: [“Phylosophy”] with [-5.2]

- You find the position of the first worse word in your string

- Use Ctranslate2 to translate with your custom model while providing the beginning of the sentence already translated (up to the position of the worse token) So we are asking Ctranslate2 to complete the sentence. (add an extra step here to call evaluate to get the predict score of the tokens)

- do some logic to compare the first word with a bad predict score from original translation vs custom training.

- Fix the original translation tokens by swapping from the custom train when the predict score of the custom train is really low.

- From here you need to iterate steps 4 to 7, by going through the words with a predict score lower than the threshold you identified as “bad”.

So you have 2 parameters you can adjust.

- threshold for tokens that you consider potential issues (used with the evaluation of the original translation)

- threshold for tokens you consider excellent (used with your custom model)

At this time I only made logic for a single word swap, but I’m going to experiment to support multi words.

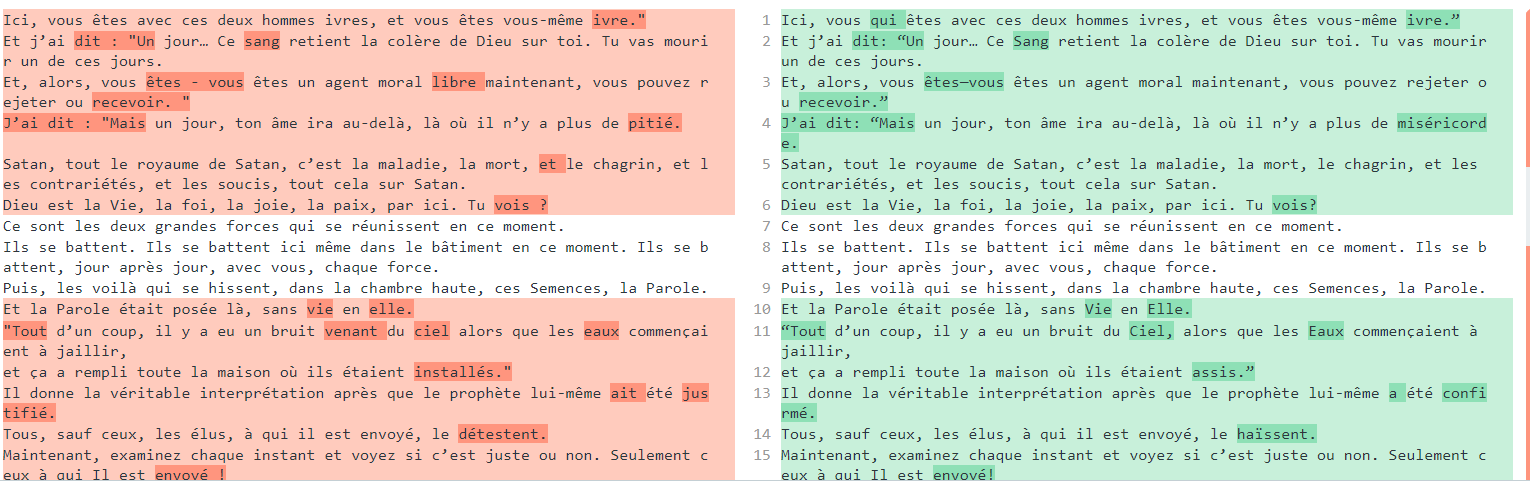

Here is a quick screenshot of few sentences that went into that process:

In this screenshot all the differences were a perfect fix.

CON of this technic:

The compute time. Calling Deepl takes 3 sec, all that extra work takes an additional 15 secs. This can be trim down with some parallel coding & also right now I need to translate then call evaluate to get the token predict scores which I could potentially get from the translate…

PRO of this technic:

- this mean that you can in domainize any text from any provider without having to do additional training or complicated data augmentation with external data for your model.

- You can potentially easily identify spots where the models is not so sure and provide suggestions just for those spots.

If anyone has additionnals questions/suggestions for me, let me know. I will post later on the results it has based on BLEU, WER, hLPOR and METEOR score. (probably in 2 weeks)

Best regards,

Samuel