What if I rerun onmt_build_vocab and then use the train_from flag? Am I expected to get an error?

To train a model, you need a vocab. Because parameters of the network are tied to a specific input/output index corresponding to a word/token. So, an existing model has a fixed vocab, in the sense that it expects a range of indices in input, and produces a range of indices in output.

You can technically pass a new vocab when using train_from. But your vocab will probably not be the same size and produce an error. And, if the vocab is the same size, the indices will probably not match so the model won’t train properly. (E.g. the index for “banana” would now be the index for “beach”, and your model would have to learn everything again.)

Note that build_vocab is merely a helper tool to prepare a vocab (basically a list of words/tokens), but the vocab passed to train could be built by any other tool as long as it’s in the proper format.

Thanks. That was really clarifying, I suspected it would be so but I wanted to be sure.

When finetuning on a new domain my approach is to use weighted corpora in training and a mix of in domain and out domain corpora for evaluation:

- Training data: out of domain (60%) and in domain (40%)

- Evaluation data: out of domain (50%) and in domain (50%)

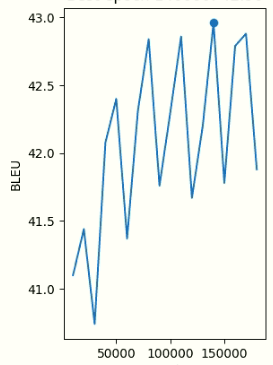

During the training process, BLEU keeps improving on the evaluation set:

Just to see what’s going on with the test, I periodically test the model on in domain data. What I noticed is that it seems to have in domain kwonledge until some point in the training which improves the baseline. However, from that point, even if the model improves BLEU on development data the model starts performing worse on in domain test. Any clues why I get this behaviour? Is it because I have out of domain data in the evaluation set? It happens with different percentages of weighted training data…

Thanks

use weighted corpora in training

Do you use the weight attributes in the training configuration or do you build your dataset beforehand by aggregating in-domain/out-of-domain data?

I use weights:

data:

out-domain:

weight: 6

in-domain:

weight: 4

Ok so there is no shuffling issue.

I don’t really know what might be happening here. Maybe your in-domain test set is not representative of your in-domain train data (or the opposite). Did you try weighting your in-domain dataset even more (like out-domain 1 and in-domain 10)?

My in-domain test is a subset of the in-domain training data. I should try 1-10 weights to see its behaviour.

Thanks

You mentioned it would be nice to add new tokens in vocabulary as a standalone script. Do you mena something similar to build_vocab.py? Apply transforms to the new corpus and update src and tgt counters and the crresponding vocabulary files?

I’m interested in implementing this feature.

EDIT: I’ve edited with minimal changes build_vocab.py to accept a new argument --update_vocab to update existing vocabulary files with new corpora. I still need to edit the training scripts.

Thanks

No, I meant this on the checkpoint level. Meaning we would convert a checkpoint trained on a set vocabulary to the same one but with a potentially different vocab.

This would require some manipulation of the model/optim parameters themselves.

Mmm, I see. So it is not required to update src and tgt vocab files? Aren’t they needed to set the known tokens? All tokens out of those files will be <unk>?

Can you give some pointers for the checkpoint manipulation?

Of course src and tgt vocab should be updated, but that’s quite trivial since the whole idea here is to update your vocab, so you’re supposed to know how you want to update it.

Here are a few pointers to what would be required:

- add the new tokens to the torchtext fields/vocab objects, retaining the original ids of existing tokens;

- create a new model with the new vocab dimensions (

build_base_model); - update the new model (and generator) with the older parameters, potentially the optim as well. Inner layers such as encoder/decoder should be transferable ‘as is’, but embeddings and generator layers would require some attention.

I think I’ve managed to get the first point (extending existing vocabularies via Torch vocab.extend()). However, I cannot find where are the vocab dimensions in the checkpoint and where should I modify them. In the build_base_model:

# Load the model states from checkpoint or initialize them.

if checkpoint is not None:

# This preserves backward-compat for models using customed layernorm

def fix_key(s):

s = re.sub(r'(.*)\.layer_norm((_\d+)?)\.b_2',

r'\1.layer_norm\2.bias', s)

s = re.sub(r'(.*)\.layer_norm((_\d+)?)\.a_2',

r'\1.layer_norm\2.weight', s)

return s

**checkpoint['model'] = {fix_key(k): v**

** for k, v in checkpoint['model'].items()}**

# end of patch for backward compatibility

model.load_state_dict(checkpoint['model'], strict=False)

generator.load_state_dict(checkpoint['generator'], strict=False)

I guess it is here where the checkpoint is loaded. Where are the vocabulary dimensions?

The vocab dimensions should only intervene in the embeddings as well as the generator.

I think if you naively update the vocab and pass a checkpoint with the old vocab you might encounter some dimension mismatch when loading the state_dicts in the section you quote.

What you probably want to do is to model.load_state_dict of only encoder and decoder layers (pop the embeddings related keys of your checkpoint['model']).

And then partially replace the embeddings parameters of your model (where the vocab matches), as well as those of the generator.

This is what I’ve done so far:

Extend model vocabulary with new words, appending new words at the end:

Old vocab: word1 word2 word3

New vocab: word1 word3 word4

Extended vocab: word1 word2 word3 + word4

New model’s embeddings are initialized as always, so update those embeddings with embeddings learned in checkpoint:

if model_opt.update_embeddings:

old_enc_emb_size = checkpoint["model"]["encoder.embeddings.make_embedding.emb_luts.0.weight"].shape[0]

old_dec_emb_size = checkpoint["model"]["decoder.embeddings.make_embedding.emb_luts.0.weight"].shape[0]

model.state_dict()["encoder.embeddings.make_embedding.emb_luts.0.weight"][:old_enc_emb_size] = checkpoint["model"]["encoder.embeddings.make_embedding.emb_luts.0.weight"][:]

model.state_dict()["decoder.embeddings.make_embedding.emb_luts.0.weight"][:old_dec_emb_size] = checkpoint["model"]["decoder.embeddings.make_embedding.emb_luts.0.weight"][:]

generator.state_dict()["0.weight"][:old_dec_emb_size] = checkpoint["generator"]["0.weight"][:]

generator.state_dict()["0.bias"][:old_dec_emb_size] = checkpoint["generator"]["0.bias"]

del checkpoint["model"]["encoder.embeddings.make_embedding.emb_luts.0.weight"]

del checkpoint["model"]["decoder.embeddings.make_embedding.emb_luts.0.weight"]

del checkpoint["generator"]["0.weight"]

del checkpoint["generator"]["0.bias"]

model.load_state_dict(checkpoint['model'], strict=False)

generator.load_state_dict(checkpoint['generator'], strict=False)

The code above assumes embedding lookup tables have the same order, as new words were appended to vocabulary:

Old embeddings: word1 (0, learned), word2 (1, learned), word3 (2, learned)

New embeddings: word1 (0, learned), word2 (1, learned), word3(2, learned), word4 (3, initialized as always)

Does this make sense?

Yes this looks quite okay to me. The important point being what you wrote:

The code above assumes embedding lookup tables have the same order

Also, you would need to make sure your del operations don’t delete the parameters you just updated though. Or is it running fine already?

del operations are deleting embedding weights from the checkpoint. Updated embeddings are assigned to the new model being generated and then load_state_dict loads the rest of the leraned parameters from the checkpoint to the new model. So the new embeddings are not deleted.

By the way, are you interested in a PR for this feature?

deloperations are deleting embedding weights from the checkpoint. Updated embeddings are assigned to the new model being generated and thenload_state_dictloads the rest of the leraned parameters from the checkpoint to the new model. So the new embeddings are not deleted.

I thought there could be some issues since your new parameters would refer to the ones from the checkpoint objects, but if it works that’s all good.

By the way, are you interested in a PR for this feature?

Sure, that would be interesting. You would need to make this quite robust to prevent any issue with potential id/dimension mismatch, order change, etc.

It would also probably be a good idea to add a functional test as well as an entry in the docs FAQ.

I guess order change or id/dimension mismatch is not an issue.

This is what the new training procedure would be:

- Run

build_vocabas usual. This would generate the vocabulary files for the new corpora. - Then in the training procedure:

- Load checkpoint (fields would be populated with the checkpoint’s vocab)

- Build fields for the new vocabulary as usual from the vocabulary files generated in step1.

- Extend checkpoint fields vocabulary with the new vocabulary fields (only appending new words at the end, as stated in Torch docs)

- Assign the extended vocabulary to the new models fields

- In

build_base_model, after loading the checkpoint’sstate_dicts, replace new model’s embeddings from 0 tolen(checkpoint.vocab_size)with checkpoint’s embeddings. It should be in the same order as in the extended vocabulary new words were appended at the end. - Remove embeddings parameters from checkpoint as new embeddings have been already added to the model

- Continue as usual

I guess you check the PR code before merging it, don’t you? Would you mind checking if something is missing from a whole picture perspective?

Furthermore, would you give me some guidelines on which functional tests would be necessary?

Your approach seems fine indeed. You can prepare a draft PR and I’ll have a look.

Tests : you can probably make some end to end test of the whole process with some toy vocab / model such as those defined here: