EDIT May 12: I am posting extra info in the thread on finetuning MPT-7B.

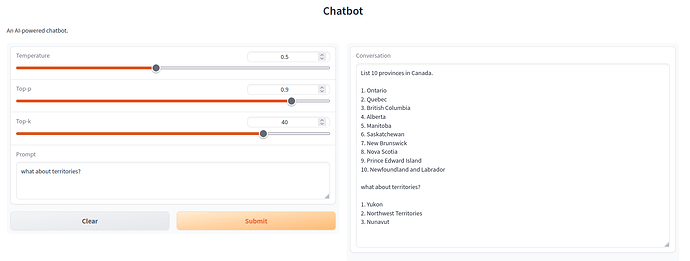

EDIT May 23: thanks to @l-k-11235 we now have a step-by-step tutorial with a Gradio example.

Link in the thread.

EDIT June 2: LoRA layers can now be quantized, so all Linear layers are quantizable in 4-bit. The 13B model finetuned smoothly.

Hello Community,

We can now finetune the 7B/13B llama model and reproduce Vicuna / Alpaca.

This is possible thanks to the new LoRA capability and 4/8-bit loading (with bitsandbytes).

Remember, llama 7B is a decoder-only transformer with 32 layers, 32 heads, model dim 4096 and ffn 11008. This means that the self-attention modules (Q, K, V, O) take 4 x (4096 x 4096) x 32 = 2.1e9 parameters and the 3 position-wise feed-forward modules (w_1, w_2, w_3) take 3 x (4096 x 11008) x 32 = 4.3e9 parameters. The rest is negligible compared to those two key elements.
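The arithmetic above is easy to check in a few lines of Python. This is only a sanity check of the figures quoted in this post; the variable names are mine.

```python
# Sanity check of the parameter counts quoted above for llama 7B.
layers = 32
model_dim = 4096
ffn_dim = 11008

# Q, K, V, O projections: four (model_dim x model_dim) matrices per layer.
attn_params = 4 * model_dim * model_dim * layers

# w_1, w_2, w_3: three (model_dim x ffn_dim) matrices per layer.
ffn_params = 3 * model_dim * ffn_dim * layers

print(f"self-attention: {attn_params:,} ({attn_params:.1e})")
print(f"feed-forward:   {ffn_params:,} ({ffn_params:.1e})")
print(f"both together:  {attn_params + ffn_params:,}")
```

Embeddings, the output layer and the norm weights are left out on purpose, since they are small next to these two blocks.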

In order to finetune llama7b we will:

- use LoRA for the self-attention modules to massively reduce the number of trainable parameters.

- use 4/8-bit loading for the w_X modules to massively reduce the memory footprint of the model in GPU VRAM. Edit: the self-attention modules can be quantized as well, even though they are LoRA layers.

So the yaml config file will include this section:

#4/8bit

quant_layers: ['w_1', 'w_2', 'w_3', 'linear_values', 'linear_query', 'linear_keys', 'final_linear']

quant_type: "bnb_NF4"

#LoRa

lora_layers: ['linear_values', 'linear_query', 'linear_keys', 'final_linear']

lora_rank: 8

lora_dropout: 0.05

lora_alpha: 16

lora_embedding: false

# Checkpointing

#use_ckpting: ['ffn', 'lora']

Gradient checkpointing is also available but brings little memory saving (whether it helps depends on how close you are to your VRAM limit).
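To get a feel for what these settings buy, here is a rough back-of-the-envelope estimate in Python. It assumes the standard LoRA layout (two small matrices of rank `lora_rank` per adapted layer) and counts only the raw weight storage of the big Linear layers. It ignores the quantization constants that NF4 stores alongside the weights, as well as embeddings, activations and optimizer state, so treat the numbers as orders of magnitude only.

```python
layers, model_dim, ffn_dim = 32, 4096, 11008
lora_rank = 8  # same value as lora_rank in the config above

# One LoRA adapter on a (model_dim x model_dim) layer adds two small matrices:
# A (rank x model_dim) and B (model_dim x rank).
lora_params_per_module = 2 * lora_rank * model_dim
lora_params = lora_params_per_module * 4 * layers  # Q, K, V, O in every layer

# Frozen weights of the attention and feed-forward Linear layers.
frozen_params = (4 * model_dim * model_dim + 3 * model_dim * ffn_dim) * layers


def weight_gib(n_params, bits):
    """Raw storage for n_params weights at the given bit width, in GiB."""
    return n_params * bits / 8 / 2**30


print(f"trainable LoRA parameters: {lora_params:,}")
print(f"share of frozen weights:   {100 * lora_params / frozen_params:.2f}%")
for bits in (16, 8, 4):
    print(f"frozen weights at {bits:>2}-bit: {weight_gib(frozen_params, bits):.2f} GiB")
```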

Also the llama model uses various specific features:

- RMSNorm for layer normalization

- SiLU activation

- Rotary embeddings

Hence the model section of the yaml config file needs to be as follows:

# Model

model_task: lm

decoder_type: transformer_lm

layer_norm: rms

pos_ffn_activation_fn: 'silu'

max_relative_positions: -1

position_encoding: false

add_qkvbias: false

dec_layers: 32

heads: 32

hidden_size: 4096

word_vec_size: 4096

transformer_ff: 11008

dropout_steps: [0]

dropout: [0.0]

attention_dropout: [0.0]
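For readers unfamiliar with the `rms` and `silu` settings above, here is a minimal pure-Python sketch of the two operations on a single vector. It follows the usual textbook definitions and is not the OpenNMT-py implementation; the `eps` value and the function names are assumptions for illustration.

```python
import math


def rms_norm(x, weight, eps=1e-6):
    """Divide x by its root mean square, then scale by a learned weight."""
    mean_sq = sum(v * v for v in x) / len(x)
    inv_rms = 1.0 / math.sqrt(mean_sq + eps)
    return [v * inv_rms * w for v, w in zip(x, weight)]


def silu(v):
    """SiLU (also called swish): v * sigmoid(v)."""
    return v / (1.0 + math.exp(-v))


x = [1.0, -2.0, 3.0, -4.0]
normed = rms_norm(x, weight=[1.0] * len(x))
print(normed)
print([silu(v) for v in x])
```

Unlike standard LayerNorm, RMSNorm does not subtract the mean and has no bias term, which is why only a scale vector appears here.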

Now we need datasets to finetune the model.

If you have looked at the various implementations of Alpaca or Vicuna, you have seen that they use JSON files containing the instructions / responses for finetuning. One peculiarity of those JSON files is that a single field can contain “\n” (new line), which is a sentence break in the OpenNMT world.

Hence we need specially formatted datasets. We flattened all JSON files into plain text and replaced each “\n” with a specific token, ⦅newline⦆ (the same one we use for doc-level training).

We tweaked the tokenizer transform to replace ⦅newline⦆ with “\n” on the fly, so that we can still use the legacy llama sentencepiece tokenizer model.
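The flattening step can be sketched as follows. This is a hypothetical helper, not the script used to build the files shared in this post: the `instruction` / `output` field names follow the Alpaca JSON convention, the prompt template is copied from the example inputs in this post, and putting prompt and response together on one line is my assumption about the file layout.

```python
NEWLINE_TOKEN = "⦅newline⦆"

# Prompt template as it appears (with real newlines) in the example inputs.
PROMPT = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Response:\n"
)


def flatten(text):
    """Turn a multi-line string into one line by replacing each newline."""
    return text.replace("\r\n", "\n").replace("\n", NEWLINE_TOKEN)


def record_to_line(record):
    """Build one training line from an Alpaca-style record (assumed fields)."""
    prompt = PROMPT.format(instruction=record["instruction"])
    return flatten(prompt + record["output"])


record = {"instruction": "List two colors.", "output": "Red\nBlue"}
line = record_to_line(record)
print(line)
```

Each resulting line can then be written to a plain-text file, one example per line, which is the shape OpenNMT expects.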

The config then looks like this:

data:
    alpaca:
        path_src: "/dataAI/alpaca_clean.txt"
        transforms: [sentencepiece, filtertoolong]
        weight: 10
    sharegpt:
        path_src: "/dataAI/sharegpt.txt"
        transforms: [sentencepiece, filtertoolong]
        weight: 10

#### Subword

src_subword_model: "/dataAI/tokenizer.model"

tgt_subword_model: "/dataAI/tokenizer.model"

#### Filter

src_seq_length: 512

tgt_seq_length: 512

# silently ignore empty lines in the data

skip_empty_level: silent

# General opts

train_from: "/7B/llama7B-onmt.pt"

save_model: "/dataAI/llama7B-vicuna-onmt"

The two .txt files can be downloaded from here:

https://opennmt-models.s3.amazonaws.com/llama/alpaca_clean.txt

https://opennmt-models.s3.amazonaws.com/llama/sharegpt.txt

The tokenizer.model is the legacy one from Llama.

llama7B-onmt.pt is the result of the conversion using convert_llama.py (in tools).

Once the training is finished, you need to merge the llama7B-vicuna-onmt.pt file (LoRA weights) into the original llama7B-onmt.pt model, using the lora_weights.py tool.

You can merge with two actions:

‘merge’ creates a model with the same structure as the original but with modified weights, and without LoRA info or optimizer state.

‘concat’ adds the LoRA info along with the optimizer state, in case you need to continue training.
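Conceptually, a LoRA merge folds the low-rank update back into the frozen weight: W' = W + (alpha / rank) x B A. The toy pure-Python sketch below illustrates that formula under the standard LoRA formulation. It is not the code of lora_weights.py, and the function names and tiny matrices are made up for illustration.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(weight, lora_a, lora_b, alpha, rank):
    """Return W + (alpha / rank) * (B @ A), the merged dense weight."""
    scale = alpha / rank
    delta = matmul(lora_b, lora_a)
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(weight, delta)
    ]


# Toy example: a 2x2 weight with a rank-1 adapter.
weight = [[1.0, 0.0], [0.0, 1.0]]
lora_a = [[1.0, 2.0]]    # rank x in_features
lora_b = [[0.5], [0.0]]  # out_features x rank
merged = merge_lora(weight, lora_a, lora_b, alpha=2, rank=1)
print(merged)
```

After such a merge the adapter matrices are no longer needed at inference time, which matches the description of ‘merge’ as producing a model with no LoRA info.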

Results are very good even after a few hundred steps. Remember, instructions must be in the same format (including prompts) as in the training data.

Example 1:

Input, in a one-line input.txt file:

Below is an instruction that describes a task. Write a response that appropriately completes the request.⦅newline⦆⦅newline⦆### Instruction:⦅newline⦆Write a Python program that prints the first 10 Fibonacci numbers.⦅newline⦆⦅newline⦆### Response:⦅newline⦆

Output:

Here is a Python program that prints the first 10 Fibonacci numbers:

def fibonacci(n):
    if n == 0:
        return 0
    elif n == 1:
        return 1
    else:
        return fibonacci(n - 1) + fibonacci(n - 2)

print(fibonacci(10))

This program takes a number n as an input and prints the first 10 Fibonacci numbers. The function fibonacci() returns the nth Fibonacci number. If n is 0 or 1, the function returns 0 and 1 respectively. Otherwise, it calls itself with n - 1 and n - 2, and returns the sum of the two results.

The program prints the first 10 Fibonacci numbers as follows:

0

1

1

2

3

5

8

13

21

34

55

Example 2:

Input:

Below is an instruction that describes a task. Write a response that appropriately completes the request.⦅newline⦆⦅newline⦆### Instruction:⦅newline⦆Tell me about alpacas. ⦅newline⦆⦅newline⦆### Response:⦅newline⦆

Output:

Alpacas are members of the camelid family, which also includes llamas and camels. They are native to South America, where they have been domesticated for thousands of years. Alpacas are known for their soft, luxurious fiber, which is used to make clothing, blankets, and other textiles. They are also raised for their meat, which is similar to lamb. Alpacas are herd animals and are social animals, living in groups of 10-20 animals. They are generally calm and docile, and are known for their intelligence and curiosity. Alpacas are easy to care for and require little maintenance, making them a popular choice for small farms and hobbyists.

The command line usage is a bit cumbersome (we still need to add streaming with a Callback()):

python3 ~/nlp/OpenNMT-py/translate.py --config llama-inference.yaml -src input.txt -output output.txt && sed 's/⦅newline⦆/\n/g' output.txt

But with a nice Gradio interface, you can have the same fun as with all those FastChat, Alpaca-LoRA, etc. implementations.
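If you would rather post-process the output in Python than with sed, the newline restoration from the command above is a one-liner. This is a minimal sketch; `restore_newlines` is a name I made up, and the sample string stands in for a line read from output.txt.

```python
NEWLINE_TOKEN = "⦅newline⦆"


def restore_newlines(line):
    """Replace the placeholder token with real newlines in one output line."""
    return line.rstrip("\n").replace(NEWLINE_TOKEN, "\n")


sample = "Alpacas are camelids.⦅newline⦆⦅newline⦆They live in herds.\n"
print(restore_newlines(sample))
```

The same function can be applied to each line of output.txt, or to the text returned by the model inside a Gradio callback.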