Hi,

As already mentioned I’m spending a lot of time on Dutch-English/English-Dutch models. On the Dutch2Eng side I’ve eliminated UNK’s by using a back-off dictionary and applying language-dependent compound splitting rules to the input. However, in the Eng2Dutch direction I am still finding an inability to deal properly with English compound noun phrases, e.g. “chief executive” despite this noun phrase appearing countless times in the training data. Incidentally, the demo system on this website also fails to handle “chief executive”. My system usually omits the first element of the noun phrase, e.g. “senior researcher” -> “researcher”.

This is an embarrassing failure in what is otherwise really nice translation output. Any suggestions to improve the situation would be welcome.

Terence

Hello Terence, did you use compound splitting in target too?

There are several things you can try:

- Use the translation length and coverage normalization options (no need to retrain anything): http://opennmt.net/OpenNMT/translation/beam_search/#length-normalization

- Train a model with a coverage model in the attention. This is not fully final but is testable here. From our experiments, the nn10 coverage model works well for some languages.

We would be interested to know if one of these options works for you.

Hello Jean, Terence,

The paper from Wu is not very conclusive regarding these 3 normalizations.

For instance there is a wide range of alpha giving the same results.

Do you guys have any feedback or benchmarks within the ONMT scope?

I will try it myself, but I would be interested to know in which configurations (languages, network size, norm parameters) it helps.

Cheers.

From our tests, length normalization works well and quite systematically (range 1 to 3). The other ones are less clear (and we have some doubts about the results reported for coverage normalization). I don’t have a systematic benchmark that I can share, but I look forward to seeing your results! It is particularly clear on long sentences, and you can use the patched version of multi-bleu.perl from here: https://github.com/OpenNMT/OpenNMT/tree/master/benchmark/3rdParty with the option -length_analysis to see the impact quantitatively.
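For readers who don't have the Wu et al. (2016) paper to hand, here is a minimal Python sketch of the length and coverage penalties as that paper defines them. It is only an illustration of the formulas, not OpenNMT's actual code. The function names, the toy scores and the small floor inside the logarithm are my own assumptions, and the sketch does not settle how the `-length_norm` value maps onto the paper's alpha.

```python
import math


def length_penalty(length, alpha):
    """lp(Y) = ((5 + |Y|) / 6) ** alpha, as given in Wu et al. (2016)."""
    return ((5.0 + length) / 6.0) ** alpha


def coverage_penalty(attention, beta):
    """cp(X;Y) = beta * sum_i log(min(sum_j p_ij, 1.0)).

    attention[j][i] is the attention weight of target step j on source word i.
    The 1e-10 floor is an assumption added here to avoid log(0).
    """
    n_src = len(attention[0])
    total = 0.0
    for i in range(n_src):
        covered = sum(step[i] for step in attention)
        total += math.log(max(min(covered, 1.0), 1e-10))
    return beta * total


def rescore(log_prob, length, attention, alpha, beta):
    """s(Y, X) = log P(Y|X) / lp(Y) + cp(X;Y)."""
    return log_prob / length_penalty(length, alpha) + coverage_penalty(attention, beta)


# Toy comparison (made-up numbers): a short and a long hypothesis.
full_cover = [[1.0, 1.0]]  # every source word fully attended, so cp = 0
short = dict(log_prob=-4.0, length=5)
long_ = dict(log_prob=-6.0, length=13)

for alpha in (0.0, 1.0):
    s_short = rescore(short["log_prob"], short["length"], full_cover, alpha, 0.0)
    s_long = rescore(long_["log_prob"], long_["length"], full_cover, alpha, 0.0)
    print(alpha, "prefers", "long" if s_long > s_short else "short")
```

With alpha = 0 the raw log-probability decides and the shorter hypothesis wins. With alpha = 1 the longer one is preferred, which is the effect one would expect to show up on long sentences.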

When you say length normalization with a range of 1 to 3, is this 1/alpha in the Wu paper?

Hello Jean,

I only used compound splitting on the source side. I intend to try out your suggestions but think they require the latest ONMT release so I’ll need to wait for a quiet moment.

Terence

Investigating this further (without yet trying the recommended options), I find that ‘chief executive’ occurs only 80 times in a corpus of approx. 5.5 M sentences. The term ‘project leader’ occurs 1058 times and is perfectly translated as “projectleider” in every test sentence I submit. I wonder how many times a phrase has to be seen to be learned.
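In case anyone wants to run the same kind of count on their own data, this is roughly how such phrase frequencies can be obtained. It is a small Python sketch, not the script actually used for the numbers above. The function name and the three sample sentences are made up for illustration; in practice you would pass the lines of your training file instead of the list.

```python
import re


def count_phrase(lines, phrase):
    """Count case-insensitive, whole-word occurrences of `phrase` in `lines`."""
    pattern = re.compile(r"\b" + re.escape(phrase) + r"\b", re.IGNORECASE)
    return sum(len(pattern.findall(line)) for line in lines)


# Tiny stand-in corpus (hypothetical sentences, not real training data).
sample = [
    "The chief executive resigned.",
    "Chief Executive and project leader met.",
    "The project leader, the project leader.",
]

for phrase in ("chief executive", "project leader"):
    print(phrase, count_phrase(sample, phrase))
```

Dividing the count by the number of sentences gives a relative frequency, which makes it easier to compare a rare phrase against a common one across corpora of different sizes.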

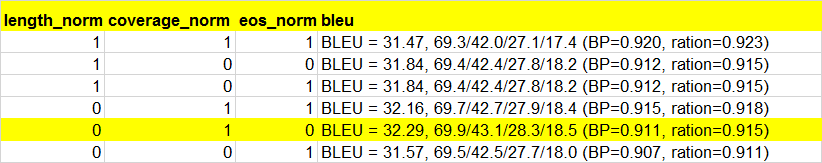

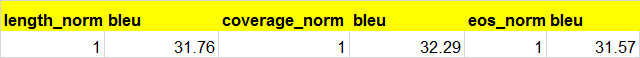

I have some length and coverage normalization test results for you.

Ori BLEU = 31.43, 69.5/42.3/27.6/17.9 (BP=0.906, ratio=0.910)

set all options:

English to Chinese, 18M sentences.
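As a side note on reading the score line: the BP value reported for the baseline follows from the length ratio through the standard BLEU brevity penalty, which is why the normalization options (which change output length) can move BLEU. A minimal Python sketch, assuming the usual BLEU definition with uniform weights over the four n-gram precisions; the function names are mine, and the inputs are the rounded figures from the baseline line in this post.

```python
import math


def brevity_penalty(ratio):
    """Standard BLEU brevity penalty; ratio = hypothesis length / reference length (> 0)."""
    if ratio >= 1.0:
        return 1.0
    return math.exp(1.0 - 1.0 / ratio)


def bleu(precisions, ratio):
    """BLEU in percent: BP times the geometric mean of the n-gram precisions (fractions)."""
    log_mean = sum(math.log(p) for p in precisions) / len(precisions)
    return 100.0 * brevity_penalty(ratio) * math.exp(log_mean)


bp = brevity_penalty(0.910)
score = bleu([0.695, 0.423, 0.276, 0.179], 0.910)
print(round(bp, 3), round(score, 2))
```

The recomputed BP rounds to the reported 0.906, and the recomputed BLEU lands within rounding distance of the reported 31.43. A ratio of 0.910 means the output is about 9% shorter than the reference, so anything that lengthens the output towards the reference length reduces this penalty.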

I now have some results from tests:

Language direction: Eng - Dutch

Model configuration:

rnn_size = 600

layers = 4

brnn = true

Trained on 5M sentences (Europarl + proprietary data)

Test set: 2.2 K sentences (Europarl)

BLEU scores (LN = -length_norm, EOS = -eos_norm):

LN 3 + EOS 3 = 28.76

LN 3 + EOS 2 = 28.75

LN 3 + EOS 1 = 28.71

LN 3 = 28.66

LN 2 = 28.63

LN 1 = 28.55

Any combination with -coverage_norm took the BLEU down below 20.00 and in one case to 5.82.

With @netxiao’s top combination of -length_norm 0 -coverage_norm 1 -eos_norm 0 I only achieved 18.92.