Hi, has anyone tried to train a Japanese-English model with OpenNMT? Do you have any recommendations or advice on the choice of training parameters (number of layers) or preprocessing steps for this language pair? Thanks in advance.

I’ve trained an English->Japanese engine; the BLEU score is 46.

I used 1.45 million sentences with 5 layers and batch_size 1000 during training.

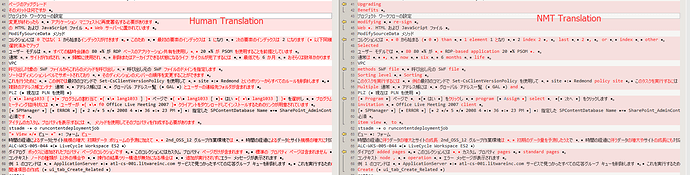

After a pilot translation with the trained engine, the result seems completely unacceptable: many unknown words and mistranslations. See the comparison result for reference:

Left: Human translation

Right: NMT translation

I also have some other engines trained with the same parameters, e.g. English->German and English->Spanish, and their translation results are generally good.

I’m not sure whether there are special steps for processing Asian languages (Chinese, Japanese, Korean) in NMT.

Thank you for sharing this. Are your models Japanese-English or English-Japanese? Did you try segmenting the Japanese sentences into word tokens before applying preprocess.lua?

Hello @liluhao1982, I am also copying @satoshi.enoue, who has done a lot of training for English<>Japanese. Can you also tell us more about your training corpus? It seems to be technical, doesn’t it?

English -> Japanese. I ran tokenize.lua on both the source and target segments.

Thanks for your response. Yes, it is technical content. The training set is ~1.45 million segments. The language pair is English -> Japanese. I ran tokenize.lua on both the source and target segments before applying preprocess.lua.

As I used the default tokenize.lua, I guess this may be the problem. For Asian/Arabic languages, I guess a special tokenizer should be applied, right?

Yes, I think this is a necessary step for word-level NMT when dealing with those languages.

Japanese and Chinese do not put spaces between words, so you need to use a morphological analyzer to tokenize those languages by inserting spaces between words. Commonly used ones include mecab, juman, kytea, etc.

There is also a good data processing tutorial from the Workshop on Asian Translation (WAT): http://lotus.kuee.kyoto-u.ac.jp/WAT/baseline/baselineSystems.html#data_preparation.html .

Below is an example of Japanese tokenization using mecab.

$ echo "次の部分はASCII characterですが、他は日本語です。" | mecab -O wakati

次の 部分 は ASCII character です が 、 他 は 日本 語 です 。

For detokenization, you can use the Perl one-liner below, described on the WAT page above.

$ echo "次の部分はASCII characterですが、他は日本語です。" | mecab -O wakati | perl -Mencoding=utf8 -pe 's/([^A-Za-zA-Za-z]) +/${1}/g; s/ +([^A-Za-zA-Za-z])/${1}/g; '

次の部分はASCII characterですが、他は日本語です。
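For anyone who prefers to keep the pipeline in Python, here is a minimal sketch of the same two substitutions as the Perl one-liner above. The function name `detokenize_ja` is made up for illustration, and the character class covers only ASCII letters, which is an assumption; extend it if your corpus contains other Latin-script letters that should keep their spaces.

```python
import re

# Assumption: only ASCII letters count as "Latin" here, as in the one-liner above.
_SPACE_AFTER_NON_LATIN = re.compile(r"([^A-Za-z]) +")
_SPACE_BEFORE_NON_LATIN = re.compile(r" +([^A-Za-z])")


def detokenize_ja(line: str) -> str:
    """Remove spaces unless they sit between two ASCII letters."""
    # Drop spaces that follow a non-Latin character.
    line = _SPACE_AFTER_NON_LATIN.sub(r"\1", line)
    # Drop spaces that precede a non-Latin character.
    return _SPACE_BEFORE_NON_LATIN.sub(r"\1", line)


if __name__ == "__main__":
    tokenized = "次の 部分 は ASCII character です が 、 他 は 日本 語 です 。"
    print(detokenize_ja(tokenized))
```

The space between "ASCII" and "character" survives because both neighbours are ASCII letters; every other space is removed. Note that digits are treated as non-Latin, so spaces around numbers are removed as well, which matches the behaviour of the Perl version.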

We are thinking of implementing a tokenizer plug-in for Asian languages, specifically Japanese and Chinese, alongside the stock tokenizer. We have shortlisted kuromoji for Japanese and the ICU tokenizer for Chinese.

It would be very helpful if you have any other suggestions for the tokenizer. Any suggestions for integrating these tokenizers into the existing code are also welcome.

@satoshi.enoue @guillaumekln

Hi,

Is your plan to just integrate existing tokenizers into the code base? The OpenNMT tokenization is optional and can be replaced by any other tool, so it does not seem to be necessary.

How did you calculate the BLEU score? Can you please share the script?

You just need to get the multi-bleu.perl script:

or refer to:

Hi,

I recommend using sentencepiece, which is an unsupervised text tokenizer and detokenizer for neural machine translation. It extends the idea of byte pair encoding to an entire sentence to handle languages without whitespace delimiters, such as Japanese and Chinese.

If you can read Japanese, there is a good tutorial by the author, who is also known as the author of mecab, one of the most popular Japanese morphological analysis tools.

Hi guys,

Has anybody trained an engine from Japanese to English?

I’ve trained an engine from English to Japanese successfully (after our review evaluation, the feedback was positive).

Now I am trying to train another engine from Japanese to English with the same corpus, just using the Japanese corpus as source and the English corpus as target (all corpora have been tokenized). The PPL seems high (>30), and when I use some of the epochs to translate, they can’t predict any translation; all output is unk.

Any suggestions are much appreciated.

Hi @liluhao1982, yes, we have trained Japanese to English models and there is nothing very different for this language pair. As usual, the key elements for a new language pair are the size of the corpus and the tokenization you select. Are you sure there is no error in the corpus preprocessing (for instance, mixing up source and target)? As a sanity check, look at the size of your vocab, the ratio of unknown words in source and target, and the sentence length distribution metrics you get from preprocess.lua.
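If you want to check these numbers independently of the preprocessing log, a rough sketch like the one below can compute them from a tokenized file. The function name `corpus_stats` and the default vocabulary cutoff of 50000 are assumptions for illustration only; use whatever vocabulary size you actually pass to preprocessing.

```python
from collections import Counter


def corpus_stats(lines, vocab_size=50000):
    """Summarize a whitespace-tokenized corpus, one sentence per line.

    vocab_size is an assumed cutoff: tokens outside the most frequent
    vocab_size types are counted as unknown.
    """
    counts = Counter()
    lengths = []
    for line in lines:
        tokens = line.split()
        counts.update(tokens)
        lengths.append(len(tokens))

    total_tokens = sum(lengths)
    # Token occurrences covered by the top vocab_size word types.
    covered = sum(count for _, count in counts.most_common(vocab_size))
    return {
        "sentences": len(lengths),
        "vocab": len(counts),
        "unk_ratio": (total_tokens - covered) / total_tokens if total_tokens else 0.0,
        "avg_len": total_tokens / len(lengths) if lengths else 0.0,
        "max_len": max(lengths, default=0),
    }
```

Run it on both sides, for example `corpus_stats(open("src.tok", encoding="utf-8"))` and the same for the target (the file names are placeholders). Different sentence counts on the two sides, or a very high unknown ratio on one side, usually point to a preprocessing problem rather than a modelling one.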

Thanks Jean.

I found the problem now: the segments are misaligned after tokenization by the default OpenNMT tokenizer, which is really strange.

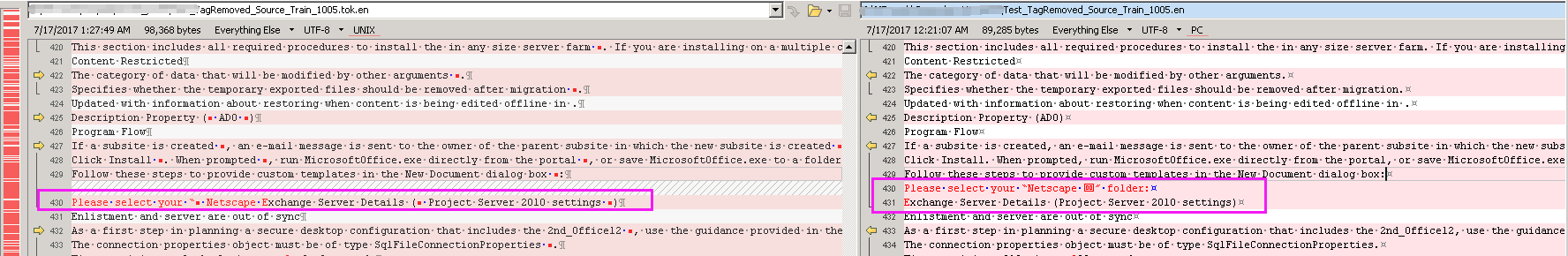

Rows 430 and 431 are merged into one sentence after tokenization in my source file, but there is no such change in my target file during tokenization, so a misalignment appears.

Left panel: It is the merged segment after tokenization

Right panel: It is the original segments before tokenization

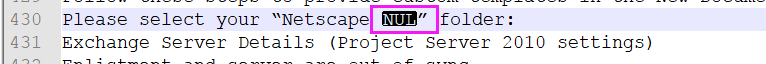

I found there is a special character in my source (row 430); its Unicode code point is \u0020. As the forum does not allow uploading other file formats, I can’t share the source files with you.

Could you please double-check whether the default tokenizer has an issue processing such special characters? I mean, why would it merge segments?

Thanks.

See this related issue:

Thanks, I located the affected segment, but it took a lot of time on my side. It would be great if tokenize.lua could throw a warning with the affected strings or row numbers when it tries to remove or merge segments. That would save users a lot of time locating the affected strings when the corpus is huge.

By the way, as far as I know, rest_translation_server.lua first tokenizes the input and detokenizes the output automatically. But if the input is Japanese and the output is English, will the default tokenizer work? I mean, can the default tokenizer tokenize Japanese correctly?

During my training, I used Mecab to tokenize the Japanese corpus.
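Until such a warning exists, a small pre-check can narrow things down. The sketch below is not part of OpenNMT; the function names are hypothetical, and the set of Unicode categories treated as suspicious (control, format, line and paragraph separators) is only a guess at what might confuse a line-based tool, since the thread does not confirm the exact cause.

```python
import unicodedata

# Assumed "suspicious" Unicode categories: control (Cc), format (Cf),
# line separator (Zl) and paragraph separator (Zp).
SUSPICIOUS_CATEGORIES = {"Cc", "Cf", "Zl", "Zp"}


def find_suspicious_rows(lines):
    """Return (row_number, [code points]) for rows with unusual characters."""
    hits = []
    for row, line in enumerate(lines, start=1):
        text = line.rstrip("\n")
        codes = sorted(
            {
                f"U+{ord(ch):04X}"
                for ch in text
                if ch != "\t" and unicodedata.category(ch) in SUSPICIOUS_CATEGORIES
            }
        )
        if codes:
            hits.append((row, codes))
    return hits


def line_counts_match(before_lines, after_lines):
    """True when tokenization kept the number of segments unchanged."""
    return len(before_lines) == len(after_lines)
```

Comparing the line count of each file before and after tokenization shows whether any segments were merged or dropped, and `find_suspicious_rows` on the original file gives the row numbers worth inspecting first.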

We should add an option to disable tokenization when using the rest server.

In any case, you should do the tokenization before sending the request. You can already test that right now, as the default tokenization will likely not split the sentence any further.