portia

May 14, 2019, 7:36am

1

Hi,

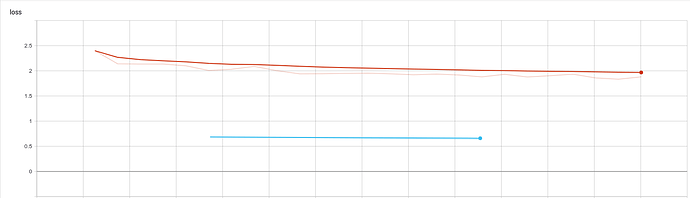

I’ve been trying to understand the eval loss, but have some doubts about it.

Why is there so much difference between the training loss and eval loss? Aren’t they the same metric?

Hi,

Can you describe the training configuration you are using? Most likely the difference comes from label smoothing which is only used for training.

portia

May 14, 2019, 8:09am

3

Sure.

data:

eval_features_file: indomain_enfr_en_training_set_val.txt

eval_labels_file: indomain_enfr_fr_training_set_val.txt

source_words_vocabulary: gen_enfr_en_vocab.txt

target_words_vocabulary: gen_enfr_fr_vocab.txt

train_features_file: indomain_enfr_en_training_set_train.txt

train_labels_file: indomain_enfr_fr_training_set_train.txt

eval:

batch_size: 32

eval_delay: 1200

exporters: last

infer:

batch_size: 32

bucket_width: 5

model_dir: run_indomain_bigbpe64mix_enfr/

params:

average_loss_in_time: true

beam_width: 4

decay_params:

model_dim: 512

warmup_steps: 4000

decay_type: noam_decay_v2

label_smoothing: 0.1

learning_rate: 2.0

length_penalty: 0.6

optimizer: LazyAdamOptimizer

optimizer_params:

beta1: 0.9

beta2: 0.998

score:

batch_size: 64

train:

average_last_checkpoints: 5

batch_size: 3072

batch_type: tokens

bucket_width: 1

effective_batch_size: 25000

keep_checkpoint_max: 5

maximum_features_length: 100

maximum_labels_length: 100

sample_buffer_size: -1

save_summary_steps: 100

train_steps: 500000

portia:

label_smoothing: 0.1

There it is. So this is expected.

portia

May 14, 2019, 8:15am

5

All right,

I’ll go have a look what label smoothing is.

Thanks very much for your help!