Hello there,

A few months ago I trained an English-Hungarian NMT model with moderate success.

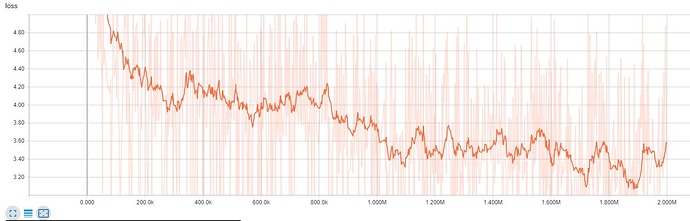

Last week I tried to train a model with beam search and replace_unk enabled, but the inference outputs are far worse than those of the previous model. (I removed the word "cat" from the vocabulary to try out the replace_unk feature.)
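To be clear about what I expect from replace_unk, here is a minimal sketch of my understanding: every unknown target token is replaced by the source token that received the highest attention at that decoding step. The function name, the `<unk>` symbol and the attention values are made up for illustration; this is not the OpenNMT-tf implementation.

```python
def replace_unknown_tokens(target_tokens, source_tokens, attention, unk="<unk>"):
    """Replace each unknown target token by the most attended source token.

    attention[t][s] is the (hypothetical) attention weight that target
    step t puts on source position s.
    """
    output = []
    for t, token in enumerate(target_tokens):
        if token == unk:
            # Pick the source position with the highest attention weight.
            best = max(range(len(source_tokens)), key=lambda s: attention[t][s])
            token = source_tokens[best]
        output.append(token)
    return output


# Toy example: "cat" is out of vocabulary, so the decoder emits <unk>.
source = ["the", "cat", "sleeps"]
target = ["a", "<unk>", "alszik"]
attention = [
    [0.8, 0.1, 0.1],
    [0.1, 0.7, 0.2],
    [0.1, 0.2, 0.7],
]
result = replace_unknown_tokens(target, source, attention)
print(result)
```

If the attention is not well trained, the copied token can come from the wrong source position, so the quality of this step depends on the attention weights.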

I trained the model on 4 GPUs with the following parameters:

onmt-main train --model=... --config=... --num_gpus=4 --gpu_allow_growth

Here is the model config python file:

```python
import opennmt as onmt
import tensorflow as tf  # needed for the tf.contrib cell and attention classes


def model():
    return onmt.models.SequenceToSequence(
        source_inputter=onmt.inputters.WordEmbedder(
            vocabulary_file_key="source_words_vocabulary",
            embedding_size=1024),
        target_inputter=onmt.inputters.WordEmbedder(
            vocabulary_file_key="target_words_vocabulary",
            embedding_size=1024),
        encoder=onmt.encoders.BidirectionalRNNEncoder(
            num_layers=8,
            num_units=800,
            reducer=onmt.layers.ConcatReducer(),
            cell_class=tf.contrib.rnn.LSTMCell,
            dropout=0.3,
            residual_connections=False),
        decoder=onmt.decoders.AttentionalRNNDecoder(
            num_layers=8,
            num_units=800,
            bridge=onmt.layers.CopyBridge(),
            attention_mechanism_class=tf.contrib.seq2seq.LuongAttention,
            cell_class=tf.contrib.rnn.LSTMCell,
            dropout=0.3,
            residual_connections=False))
```

Here is the full config yaml file with the unused lines commented out:

```yaml
# Do not use this file as is! It only lists and documents available options
# without caring about their consistency.

# The directory where models and summaries will be saved. It is created if it does not exist.
model_dir: 0817_14_catless1

data:
  # (required for train_and_eval and train run types).
  train_features_file: train.en-hu.en
  train_labels_file: train.en-hu.hu

  # (required for train_and_eval and eval run types).
  eval_features_file: test.en-hu.en
  eval_labels_file: test.en-hu.hu

  # (optional) Models may require additional resource files (e.g. vocabularies).
  source_words_vocabulary: vocab_untok_catless.en
  target_words_vocabulary: vocab_untok_catless.hu

# Model and optimization parameters.
params:
  # The optimizer class name in tf.train or tf.contrib.opt.
  #optimizer: AdamOptimizer
  optimizer: GradientDescentOptimizer
  # (optional) Additional optimizer parameters as defined in their documentation.
  #optimizer_params:
  #  beta1: 0.8
  #  beta2: 0.998
  learning_rate: 1.0

  # (optional) Global parameter initialization [-param_init, param_init].
  param_init: 0.1

  # (optional) Maximum gradients norm (default: None).
  clip_gradients: 5.0

  # (optional) Weights regularization penalty (default: null).
  regularization:
    type: l2  # can be "l1", "l2", "l1_l2" (case-insensitive).
    scale: 1e-4  # if using "l1_l2" regularization, this should be a YAML list.

  # (optional) Average loss in the time dimension in addition to the batch dimension (default: False).
  average_loss_in_time: false

  # (optional) The type of learning rate decay (default: None). See:
  #  * https://www.tensorflow.org/versions/master/api_guides/python/train#Decaying_the_learning_rate
  #  * opennmt/utils/decay.py
  # This value may change the semantics of other decay options. See the documentation or the code.
  decay_type: exponential_decay
  # (optional unless decay_type is set) The learning rate decay rate.
  decay_rate: 0.7
  # (optional unless decay_type is set) Decay every this many steps.
  decay_steps: 70000
  # (optional) The number of training steps that make 1 decay step (default: 1).
  decay_step_duration: 1
  # (optional) If true, the learning rate is decayed in a staircase fashion (default: True).
  staircase: true
  # (optional) After how many steps to start the decay (default: 0).
  start_decay_steps: 700000
  # (optional) Stop decay when this learning rate value is reached (default: 0).
  minimum_learning_rate: 0.0001

  # (optional) Type of scheduled sampling (can be "constant", "linear", "exponential",
  # or "inverse_sigmoid", default: "constant").
  scheduled_sampling_type: constant
  # (optional) Probability to read directly from the inputs instead of sampling categorically
  # from the output ids (default: 1).
  scheduled_sampling_read_probability: 1
  # (optional unless scheduled_sampling_type is set) The constant k of the schedule.
  scheduled_sampling_k: 0

  # (optional) The label smoothing value.
  label_smoothing: 0.1

  # (optional) Width of the beam search (default: 1).
  beam_width: 5
  # (optional) Length penalty weight to apply on hypotheses (default: 0).
  length_penalty: 0.2
  # (optional) Maximum decoding iterations before stopping (default: 250).
  maximum_iterations: 200
  # (optional) Replace unknown target tokens by the original source token with the
  # highest attention (default: false).
  replace_unknown_target: true

# Training options.
train:
  batch_size: 20
  # (optional) Batch size is the number of "examples" or "tokens" (default: "examples").
  batch_type: examples

  # (optional) Save a checkpoint every this many steps.
  save_checkpoints_steps: 5000
  # (optional) How many checkpoints to keep on disk.
  keep_checkpoint_max: 3

  # (optional) Save summaries every this many steps.
  save_summary_steps: 1000

  # (optional) Train for this many steps. If not set, train forever.
  train_steps: 2000000
  # (optional) If true, makes a single pass over the training data (default: false).
  single_pass: false

  # (optional) The maximum length of feature sequences during training (default: None).
  maximum_features_length: 150
  # (optional) The maximum length of label sequences during training (default: None).
  maximum_labels_length: 150

  # (optional) The width of the length buckets to select batch candidates from (default: 5).
  bucket_width: 5

  # (optional) The number of threads to use for processing data in parallel (default: 4).
  num_threads: 8

  # (optional) The number of elements from which to sample during shuffling (default: 500000).
  # Set 0 or null to disable shuffling, -1 to match the number of training examples.
  sample_buffer_size: 500000

  # (optional) The number of batches to prefetch asynchronously. If not set, use an
  # automatically tuned value on TensorFlow 1.8+ and 1 on older versions. (default: null).
  prefetch_buffer_size: null

  # (optional) Number of checkpoints to average at the end of the training to the directory
  # model_dir/avg (default: 0).
  average_last_checkpoints: 8

# (optional) Evaluation options.
eval:
  # (optional) The batch size to use (default: 32).
  batch_size: 30

  # (optional) The number of threads to use for processing data in parallel (default: 1).
  num_threads: 2

  # (optional) The number of batches to prefetch asynchronously (default: 1).
  prefetch_buffer_size: 1

  # (optional) Evaluate every this many seconds (default: 18000).
  eval_delay: 7200

  # (optional) Save evaluation predictions in model_dir/eval/.
  save_eval_predictions: true
  # (optional) Evaluator or list of evaluators that are called on the saved evaluation predictions.
  # Available evaluators: BLEU, BLEU-detok, ROUGE
  external_evaluators: BLEU

  # (optional) Model exporter(s) to use during the training and evaluation loop:
  # last, final, best, or null (default: last).
  exporters: last

# (optional) Inference options.
infer:
  # (optional) The batch size to use (default: 1).
  batch_size: 10

  # (optional) The number of threads to use for processing data in parallel (default: 1).
  num_threads: 1

  # (optional) The number of batches to prefetch asynchronously (default: 1).
  prefetch_buffer_size: 1

  # (optional) For compatible models, the number of hypotheses to output (default: 1).
  n_best: 2

# (optional) Scoring options.
score:
  # (optional) The batch size to use (default: 64).
  batch_size: 32

  # (optional) The number of threads to use for processing data in parallel (default: 1).
  num_threads: 2

  # (optional) The number of batches to prefetch asynchronously (default: 1).
  prefetch_buffer_size: 1
```
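In case the decay settings matter, this is a small sketch of how I read the learning rate schedule from the config above. It assumes the rate stays at `learning_rate` until `start_decay_steps`, is then multiplied by `decay_rate` once every `decay_steps` steps (staircase), and is never allowed to drop below `minimum_learning_rate`. The function is my own illustration and may not match opennmt/utils/decay.py exactly; `decay_step_duration` is 1 here, so I left it out.

```python
import math


def learning_rate_at(step, base_lr=1.0, decay_rate=0.7, decay_steps=70000,
                     start_decay_steps=700000, minimum_learning_rate=0.0001,
                     staircase=True):
    """Learning rate at a given training step under the assumptions above."""
    if step < start_decay_steps:
        # No decay before start_decay_steps.
        return base_lr
    progress = (step - start_decay_steps) / decay_steps
    if staircase:
        # Decay in discrete jumps instead of continuously.
        progress = math.floor(progress)
    return max(base_lr * decay_rate ** progress, minimum_learning_rate)


for step in (0, 700000, 770000, 1400000, 2000000):
    print(step, learning_rate_at(step))
```

Under these assumptions the rate stays at 1.0 for the first 700000 steps, so the decay only affects the later part of the run.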

Please help me find out what I have missed.

Thanks,

Thomas

With the Adam optimizer I also had some problems, which is why I stick with SGD. Can regularization cause this kind of problem?
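To make the regularization question more concrete, here is a rough back-of-the-envelope estimate of the L2 penalty right after initialization. It assumes the penalty is computed as `scale * sum(w^2) / 2` (the convention of `tf.contrib.layers.l2_regularizer`; I have not checked that OpenNMT-tf applies it this way) and that all weights are drawn uniformly from [-param_init, param_init]. The function name is hypothetical.

```python
def expected_l2_penalty(num_weights, init_range=0.1, scale=1e-4):
    """Expected L2 penalty at initialization under the assumptions above."""
    # For w ~ Uniform(-a, a), the expected value of w^2 is a^2 / 3.
    mean_square = init_range ** 2 / 3.0
    return scale * 0.5 * num_weights * mean_square


# Estimated penalty contributed by every million weights.
per_million = expected_l2_penalty(1000000)
print(per_million)
```

The penalty grows linearly with the number of weights, so for a large model it could be a noticeable part of the total loss compared with the cross-entropy term.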
