Hi all,

Currently, I am training models on two different systems using OpenNMT (Lua Torch). I know that OpenNMT (Lua) is no longer as actively developed, but OpenNMT-py lacks target-side word features.

I have a desktop computer (i5-4690K, 16 GB RAM, 2x GTX 1070) and a shared server (2x Xeon E5-2603 v4, i.e. two CPUs, 64 GB RAM, 5x GTX 1080 Ti). I trained the exact same model on both (English to Irish, 1M sentences, default parameters except for the maximum sequence length, which is 80 instead of 50) and found that the desktop was much faster than the server (!).

For this experiment, the desktop was not running anything else. The server is shared, so the other 4 cards may or may not have been in use at the same time.

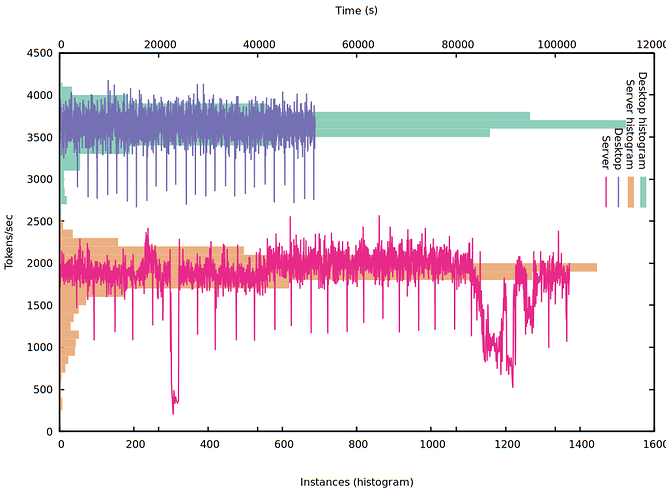

I plotted tokens/second for both system (both the exact measurements and a histogram with the amount of events in 100tok/sec bins):

So, even ignoring the drops on the server at ~25k s and ~90k s (probably someone else launched something CPU-intensive), the 1080 Ti reaches only about half the throughput of the 1070, which makes no sense at all.
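
For reference, the binning behind the histogram can be sketched as below. This is a minimal sketch, assuming the tokens/sec values have already been extracted from the training logs into a list of floats (log parsing is not shown, and the function names are hypothetical). It also computes the ratio of median throughputs, which is one way to compare the two machines without removing the drops by hand, since the median is barely affected by a few outliers.

```python
from collections import Counter
from statistics import median

# Bin width in tokens/sec, matching the 100 tok/s bins of the histogram.
BIN_WIDTH = 100


def histogram(samples, bin_width=BIN_WIDTH):
    """Count measurements per bin; each key is the lower edge of its bin."""
    counts = Counter(int(s // bin_width) * bin_width for s in samples)
    return dict(sorted(counts.items()))


def median_ratio(baseline, other):
    """Median throughput of `other` divided by that of `baseline`.

    The median is largely insensitive to a few short drops, so this is a
    rough alternative to excluding the drops manually.
    """
    return median(other) / median(baseline)


if __name__ == "__main__":
    # Toy values for illustration only, not real measurements.
    toy = [120.0, 180.0, 250.0]
    print(histogram(toy))  # {100: 2, 200: 1}
```

With real data, `median_ratio(desktop_samples, server_samples)` would give a single number summarizing the slowdown.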

I have the feeling that the CPUs might not be powerful enough to handle all 5 GPUs. Maybe it is a PCIe lane issue, but I am a bit lost in this environment and I do not know how to debug it properly.
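
One way to test the PCIe hypothesis is to compare each GPU's current PCIe link generation and width with the maximum it supports. The sketch below is a starting point under assumptions, not a verified diagnosis: it assumes `nvidia-smi` is installed and supports the `pcie.link.*` fields of `--query-gpu` (listed by `nvidia-smi --help-query-gpu`), and the helper names are hypothetical. GPUs may drop to a lower link generation when idle to save power, so the check is only meaningful while training is running. `nvidia-smi topo -m` can additionally show how the GPUs are attached to the two CPU sockets.

```python
import subprocess

# Fields documented by `nvidia-smi --help-query-gpu` (assumed available).
QUERY = ("index,pcie.link.gen.current,pcie.link.gen.max,"
         "pcie.link.width.current,pcie.link.width.max")


def query_nvidia_smi():
    """Run nvidia-smi and return its raw CSV output (no header, no units)."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def _to_int(field):
    """Convert a CSV field to int; values such as '[N/A]' become None."""
    field = field.strip()
    return int(field) if field.isdigit() else None


def parse_pcie(csv_text):
    """Parse one line per GPU into dicts of (current, max) pairs."""
    gpus = []
    for line in csv_text.strip().splitlines():
        if not line.strip():
            continue
        idx, gen_cur, gen_max, width_cur, width_max = (
            _to_int(f) for f in line.split(",")
        )
        gpus.append({"index": idx,
                     "gen": (gen_cur, gen_max),
                     "width": (width_cur, width_max)})
    return gpus


def below_max(gpus, key):
    """Indices of GPUs whose current `key` ('gen' or 'width') is below max."""
    return [g["index"] for g in gpus
            if None not in g[key] and g[key][0] < g[key][1]]
```

On the server, `below_max(parse_pcie(query_nvidia_smi()), "width")` would list the GPUs running on fewer lanes than they support.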

Has any of you experienced a similar situation? Do you know of any specific tools that could help me find out what the issue with the server is?

Thanks a lot.